Adaptive Rate Limiting for Carrier Integration: Token Bucket vs Leaky Bucket Algorithms That Survive 2026's Multi-Carrier Migration Crisis

The carrier integration landscape faces a perfect storm in 2026. USPS Web Tools shut down on January 25, 2026, while FedEx's remaining SOAP-based endpoints will be fully retired in June 2026. Meanwhile, USPS's new API structure defaults to 60 requests per hour, a rate limit that's unsustainable for most businesses.

Your existing rate limiting logic won't survive this migration. Static rate limiting approaches that worked in 2020 are failing spectacularly in 2025's complex API ecosystem. The problem isn't just volume. Bulk processing scenarios create cascading failures that simple rate limit headers don't prepare you for.

Understanding Carrier-Specific Rate Limiting Complexity

The 2026 migration reveals how carrier rate limits interact in ways that break traditional assumptions. DHL provides 250 calls per day with a maximum of 1 call every 5 seconds upon initial request, while USPS's new limit drops from 6,000 requests per minute to just 60 per hour—essentially one validation request per minute, making many workflows impossible.
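To make the scale of that change concrete, here is a small arithmetic sketch. The helper name `drain_time_seconds` is illustrative, and the figure is the steady-state pace only: a limiter that starts with a full quota would send the first requests sooner.

```python
def drain_time_seconds(batch_size: int, limit: int, window_seconds: int) -> float:
    """Steady-state time to send batch_size requests at `limit` per window."""
    if limit <= 0 or window_seconds <= 0:
        raise ValueError("limit and window_seconds must be positive")
    return batch_size * window_seconds / limit


# 500 address validations under the two USPS limits quoted above.
old = drain_time_seconds(500, limit=6000, window_seconds=60)   # 5.0 seconds
new = drain_time_seconds(500, limit=60, window_seconds=3600)   # 30000.0 seconds
print(f"old: {old:.0f} s, new: {new / 3600:.1f} h")            # old: 5 s, new: 8.3 h
```

A batch that used to clear in seconds now occupies the quota for most of a working day, which is why queueing and caching move from optimization to requirement.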

These aren't just numbers to manage. DHL bases minimum daily calls on average monthly shipping volume and expects calls distributed evenly throughout the day, while UPS tracking starts with 250 calls daily at 1 call per 5 seconds. Multi-tenant environments face compounded risk because rate limits don't just add up—they interact unpredictably when concurrent workflows hit the same carrier simultaneously.

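Carrier APIs also signal trouble in different ways. A 429 Too Many Requests response, often with a Retry-After header, means you exceeded a limit and should slow down. A 503 Service Unavailable during a maintenance window or a 502 Bad Gateway from a failing upstream system means the carrier is temporarily unhealthy and you should try again later. Your rate limiting logic must distinguish between "slow down" and "try again later" signals to avoid unnecessary backoff cascades.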
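One way to separate the two kinds of signal is a small classifier in front of your retry logic. The sketch below is a minimal illustration, not any carrier's documented contract: the `Signal` enum and `classify_response` are hypothetical names, treating 429 as "slow down" and 502/503/504 as "try again later" is an assumption, and only the delta-seconds form of Retry-After is parsed.

```python
from enum import Enum
from typing import Mapping, Optional, Tuple


class Signal(Enum):
    OK = "ok"
    SLOW_DOWN = "slow_down"          # we exceeded a limit: reduce our own rate
    TRY_LATER = "try_again_later"    # carrier-side trouble: retry, keep our rate
    FAIL = "fail"                    # not retryable


def classify_response(status: int, headers: Mapping[str, str]) -> Tuple[Signal, Optional[float]]:
    """Return the signal and, if present, the Retry-After delay in seconds.

    Assumes header names are already normalized to 'Retry-After' and ignores
    the HTTP-date form of the header.
    """
    retry_after = None
    raw = headers.get("Retry-After")
    if raw is not None and raw.strip().isdigit():
        retry_after = float(raw.strip())

    if status == 429:
        return Signal.SLOW_DOWN, retry_after
    if status in (502, 503, 504):
        return Signal.TRY_LATER, retry_after
    if 200 <= status < 300:
        return Signal.OK, None
    return Signal.FAIL, None
```

Only `SLOW_DOWN` should shrink a bucket's rate. `TRY_LATER` should schedule a retry without penalizing the tenant's quota.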

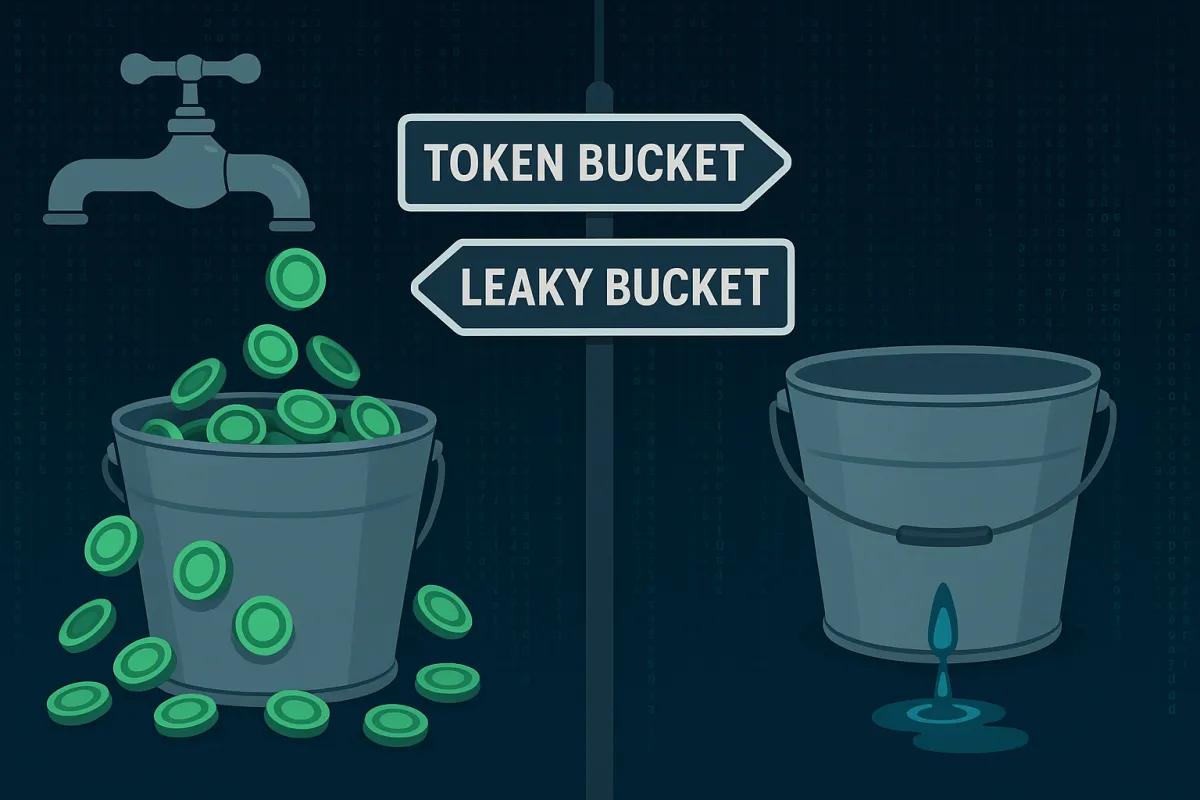

Token Bucket Algorithm for Carrier Integration

The token bucket algorithm manages burst traffic by maintaining a bucket of tokens that refills at a constant rate up to a fixed capacity. Each request consumes one or more tokens, and a request is rejected when too few remain. In Redis, the bucket fits in a HASH with two fields: tokens (the current token count) and last_refill (the timestamp of the last refill). This makes it a good fit for API rate limiting where you want to allow short bursts.

This approach proves particularly valuable for carrier integration during the 2026 migration crisis. When FedEx's REST API becomes your only option, token buckets allow controlled bursts during label generation peaks while maintaining the steady rate carriers expect. The refill-check-consume sequence runs inside a single EVAL call, reading the hash, computing new tokens based on elapsed time, and conditionally decrementing—all atomically.

Implementation requires careful attention to carrier-specific patterns. Here's a Redis Lua script optimized for multi-tenant carrier scenarios:

    -- KEYS[1]: bucket key
    -- ARGV: capacity, refill_rate (tokens per second), requested tokens,
    --       now (seconds, supplied by the caller)
    local key = KEYS[1]
    local capacity = tonumber(ARGV[1])
    local refill_rate = tonumber(ARGV[2])
    local requested = tonumber(ARGV[3])
    local now = tonumber(ARGV[4])

    -- A missing key means a new bucket: start full, refilled "now"
    local bucket = redis.call('HMGET', key, 'tokens', 'last_refill')
    local tokens = tonumber(bucket[1]) or capacity
    local last_refill = tonumber(bucket[2]) or now

    -- Refill for the elapsed time (never negative), capped at capacity
    local elapsed = math.max(0, now - last_refill)
    local tokens_to_add = elapsed * refill_rate
    tokens = math.min(capacity, tokens + tokens_to_add)

    if tokens >= requested then
      tokens = tokens - requested
      redis.call('HMSET', key, 'tokens', tokens, 'last_refill', now)
      redis.call('EXPIRE', key, 3600)
      -- Redis truncates Lua numbers to integers in replies
      return {1, tokens}
    else
      return {0, tokens}
    end

Token buckets work well when carriers like UPS or FedEx allow reasonable burst capacity but enforce longer-term average rates. Platforms including Cargoson, MercuryGate, and project44 leverage token bucket strategies to handle mobile apps that batch requests on launch or APIs with naturally bursty traffic patterns.
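To check the burst-then-average behavior without a Redis instance, the same refill-check-consume arithmetic can be mirrored in memory. This is a sketch for unit tests, not a replacement for the atomic script. The `TokenBucket` class and its parameter names are illustrative, and time is passed in explicitly so tests stay deterministic.

```python
class TokenBucket:
    """In-memory mirror of the refill-check-consume sequence."""

    def __init__(self, capacity: float, refill_rate: float, now: float = 0.0) -> None:
        self.capacity = capacity
        self.refill_rate = refill_rate   # tokens per second
        self.tokens = capacity           # a new bucket starts full
        self.last_refill = now

    def try_consume(self, now: float, requested: float = 1.0) -> bool:
        # Refill for the elapsed time, capped at capacity.
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        # Consume only if enough tokens remain.
        if self.tokens >= requested:
            self.tokens -= requested
            return True
        return False


bucket = TokenBucket(capacity=10, refill_rate=1.0)
burst = [bucket.try_consume(now=0.0) for _ in range(12)]
later = [bucket.try_consume(now=3.0) for _ in range(4)]
```

With a capacity of 10 and one token per second, the first ten requests of the burst pass and the last two are denied. Three seconds later, exactly three more requests pass.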

Leaky Bucket Algorithm for Carrier Integration

The leaky bucket algorithm enforces strict output rates, processing requests at a constant rate regardless of input bursts. Determining how to queue requests is up to you. APIs often choose to respond immediately and include a Retry-After header in the response, avoiding server-side queueing.

Leaky buckets prove essential for carriers like DHL that expect evenly distributed requests throughout the day. DHL allows up to 10 fetches per shipment per day and expects these calls done in an even way throughout the day. This requirement breaks with traditional burst-then-quiet patterns many systems assume.
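The even-distribution requirement translates directly into a spacing between calls. The sketch below works through that arithmetic with the quotas quoted in this article. The function names are illustrative, and the minimum-gap check is an assumption about how you might combine a daily quota with a per-call interval.

```python
from typing import List

SECONDS_PER_DAY = 24 * 60 * 60


def even_spacing_seconds(daily_quota: int, min_gap_seconds: float = 0.0) -> float:
    """Gap between calls that spreads a daily quota evenly over 24 hours."""
    if daily_quota <= 0:
        raise ValueError("daily_quota must be positive")
    spacing = SECONDS_PER_DAY / daily_quota
    if spacing < min_gap_seconds:
        raise ValueError("quota cannot be spent in one day at this minimum gap")
    return spacing


def daily_schedule(daily_quota: int, start: float = 0.0) -> List[float]:
    """Timestamps (seconds from start) for one evenly spread day of calls."""
    spacing = even_spacing_seconds(daily_quota)
    return [start + i * spacing for i in range(daily_quota)]


per_account = even_spacing_seconds(250, min_gap_seconds=5.0)  # about 345.6 seconds
per_shipment = even_spacing_seconds(10)                       # 8640.0 seconds (2.4 hours)
```

A 250-call daily quota therefore allows one call roughly every 5 minutes 46 seconds, far slower than the 5-second minimum gap alone would suggest.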

Two implementation modes serve different carrier integration needs:

Policing Mode provides immediate allow/deny decisions with minimal operational complexity, suitable for carriers with hard limits where overflow traffic gets dropped immediately. Shaping Mode queues excess requests to maintain steady output rates, ideal for carriers like DHL that prefer consistent request patterns over time.
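The difference between the two modes comes down to what happens when the next send slot lies in the future. In the sketch below, a single hypothetical `LeakyBucketShaper` class covers both: a `max_delay` of zero gives policing, and a positive value gives shaping with a bounded queue. The names and the slot-reservation approach are assumptions for illustration.

```python
from typing import Optional


class LeakyBucketShaper:
    """Reserve evenly spaced send slots, one every interval_seconds."""

    def __init__(self, interval_seconds: float, max_delay: float) -> None:
        self.interval = interval_seconds
        self.max_delay = max_delay   # 0 means policing: never wait
        self.next_slot = 0.0         # earliest time the next request may go out

    def reserve(self, now: float) -> Optional[float]:
        """Return how long to wait before sending, or None if rejected."""
        slot = max(now, self.next_slot)
        delay = slot - now
        if delay > self.max_delay:
            return None              # overflow: drop or return Retry-After
        self.next_slot = slot + self.interval
        return delay


shaper = LeakyBucketShaper(interval_seconds=5.0, max_delay=10.0)
shaped = [shaper.reserve(now=0.0) for _ in range(4)]

policer = LeakyBucketShaper(interval_seconds=5.0, max_delay=0.0)
policed = [policer.reserve(now=0.0), policer.reserve(now=0.0), policer.reserve(now=5.0)]
```

In shaping mode, four simultaneous requests receive delays of 0, 5, and 10 seconds, and the fourth is rejected because it would wait longer than the cap. In policing mode, the second simultaneous request is rejected immediately.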

Redis implementation for leaky bucket policing:

    -- KEYS[1]: bucket key
    -- ARGV: capacity, leak_rate (requests per second),
    --       now (seconds, supplied by the caller)
    local key = KEYS[1]
    local capacity = tonumber(ARGV[1])
    local leak_rate = tonumber(ARGV[2])
    local now = tonumber(ARGV[3])

    -- A missing key means an empty bucket
    local bucket = redis.call('HMGET', key, 'level', 'last_leak')
    local level = tonumber(bucket[1]) or 0
    local last_leak = tonumber(bucket[2]) or now

    -- Drain the bucket for the elapsed time, never below empty
    local elapsed = math.max(0, now - last_leak)
    local leak_amount = elapsed * leak_rate
    level = math.max(0, level - leak_amount)

    -- Admit only if the whole request fits within capacity
    if level + 1 <= capacity then
      level = level + 1
      redis.call('HMSET', key, 'level', level, 'last_leak', now)
      -- A full bucket drains in capacity / leak_rate seconds
      redis.call('EXPIRE', key, math.floor(capacity / leak_rate) + 1)
      return {1, capacity - level}
    else
      return {0, 0}
    end

Enterprise platforms handle leaky bucket complexity differently. Blue Yonder implements sophisticated queueing mechanisms for steady carrier throughput, while Manhattan Active includes circuit breaker patterns that respond to sustained 429 responses. Cargoson maintains different retry strategies for different types of carrier responses, while nShift and Descartes provide enterprise-grade queue management.

Hybrid Approaches for Multi-Carrier Environments

Real production environments require combinations of algorithms to handle diverse carrier requirements. Use a token bucket for per-user limits and a fixed window for global limits. Layering algorithms gives you fine-grained control over different types of traffic.

The 2026 migration crisis creates scenarios where hybrid approaches become necessary rather than optimal. When USPS limits force you to queue address validations while FedEx REST APIs allow label bursts, your rate limiting architecture must handle both patterns simultaneously without cross-contamination.

Adaptive algorithms that adjust to carrier behavior provide the most robust solution. Monitor multiple signals: lower your limits when the error rate exceeds 5%, reduce concurrent requests when latency crosses 500ms, and combine a token bucket with sliding window counters so the limiter reacts to sustained trends rather than single responses.
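A simple way to act on those signals is an additive-increase, multiplicative-decrease controller. The sketch below uses the 5% and 500ms thresholds from the text. The halving factor, the step of one, and the `AdaptiveLimit` name are assumptions chosen for illustration, not tuned values.

```python
class AdaptiveLimit:
    """Adjust a request limit from observed error rate and latency (AIMD)."""

    def __init__(self, initial: float, floor: float, ceiling: float,
                 error_threshold: float = 0.05,
                 latency_threshold_ms: float = 500.0,
                 decrease_factor: float = 0.5,
                 increase_step: float = 1.0) -> None:
        self.limit = initial
        self.floor = floor
        self.ceiling = ceiling
        self.error_threshold = error_threshold
        self.latency_threshold_ms = latency_threshold_ms
        self.decrease_factor = decrease_factor
        self.increase_step = increase_step

    def update(self, error_rate: float, latency_ms: float) -> float:
        """Call once per observation interval; returns the new limit."""
        unhealthy = (error_rate > self.error_threshold
                     or latency_ms > self.latency_threshold_ms)
        if unhealthy:
            # Back off quickly when the carrier is struggling.
            self.limit = max(self.floor, self.limit * self.decrease_factor)
        else:
            # Recover slowly once it looks healthy again.
            self.limit = min(self.ceiling, self.limit + self.increase_step)
        return self.limit


limit = AdaptiveLimit(initial=20, floor=1, ceiling=40)
after_errors = limit.update(error_rate=0.10, latency_ms=200)
after_latency = limit.update(error_rate=0.01, latency_ms=800)
after_recovery = limit.update(error_rate=0.01, latency_ms=200)
```

The resulting limit can feed the capacity or refill rate of a per-carrier bucket, so a struggling carrier sees less traffic without any tenant-level configuration change.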

Multi-tenant isolation becomes critical when one tenant's batch job could exhaust rate limits for all customers. Implement tenant-specific buckets with overflow protection:

    -- Sketch: check_token_bucket and check_leaky_bucket stand for the two
    -- scripts above, wrapped as functions that return true when allowed.
    -- tenant_id, carrier, and the capacity/rate values come from the caller.
    local tenant_key = "rate_limit:tenant:" .. tenant_id .. ":carrier:" .. carrier
    local global_key = "rate_limit:global:carrier:" .. carrier

    -- Check tenant-specific limits first
    local tenant_allowed = check_token_bucket(tenant_key, tenant_capacity, tenant_rate)
    if not tenant_allowed then
      return {0, "tenant_limit_exceeded"}
    end

    -- Check global carrier limits
    -- Note: a tenant token is already spent if this check denies the request;
    -- refund it, or verify both limits before consuming from either.
    local global_allowed = check_leaky_bucket(global_key, global_capacity, global_rate)
    if not global_allowed then
      return {0, "carrier_limit_exceeded"}
    end

    return {1, "allowed"}

MercuryGate and Blue Yonder implement exponential backoff with maximum retry limits, while SAP TM includes sophisticated queueing mechanisms. Cargoson, project44, and Descartes each provide different approaches to multi-tenant carrier rate limiting, with some emphasizing isolation and others focusing on load balancing across available capacity.
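The tenant-then-global check shown earlier can be prototyped in memory to test isolation behavior. The sketch below verifies both limits before consuming from either, so a request denied at the carrier level does not cost the tenant a token. For brevity it uses token buckets at both levels rather than a leaky bucket for the global limit, and `Bucket` and `LayeredLimiter` are hypothetical names.

```python
from typing import Dict, Tuple


class Bucket:
    def __init__(self, capacity: float, refill_rate: float, now: float) -> None:
        self.capacity = capacity
        self.refill_rate = refill_rate   # tokens per second
        self.tokens = capacity
        self.last_refill = now

    def available(self, now: float) -> float:
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        return self.tokens

    def take(self) -> None:
        self.tokens -= 1


class LayeredLimiter:
    def __init__(self, tenant_capacity: float, tenant_rate: float,
                 global_capacity: float, global_rate: float) -> None:
        self.tenant_args = (tenant_capacity, tenant_rate)
        self.global_args = (global_capacity, global_rate)
        self.tenant_buckets: Dict[Tuple[str, str], Bucket] = {}
        self.global_buckets: Dict[str, Bucket] = {}

    def check(self, tenant_id: str, carrier: str, now: float) -> Tuple[bool, str]:
        tenant_key = (tenant_id, carrier)
        if tenant_key not in self.tenant_buckets:
            self.tenant_buckets[tenant_key] = Bucket(*self.tenant_args, now)
        if carrier not in self.global_buckets:
            self.global_buckets[carrier] = Bucket(*self.global_args, now)
        tenant = self.tenant_buckets[tenant_key]
        shared = self.global_buckets[carrier]

        # Verify both limits before spending a token from either bucket.
        if tenant.available(now) < 1:
            return False, "tenant_limit_exceeded"
        if shared.available(now) < 1:
            return False, "carrier_limit_exceeded"
        tenant.take()
        shared.take()
        return True, "allowed"
```

In Redis, the same check-then-commit order would need to live inside one script that touches both keys so the two decisions stay atomic.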

Production Implementation Patterns

The 2026 migration crisis demands production-ready rate limiting that survives real-world failure modes. Implement throttling by slowing down requests rather than blocking entirely, using delayed request processing or queue systems. Test circuit breaker patterns with sustained 429 responses and verify that jitter implementation prevents thundering herd problems.
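Jitter is easiest to verify when the random source is injectable. The sketch below implements exponential backoff with full jitter and honors a Retry-After value when the carrier supplies one. The function name, the 1-second base, and the 60-second cap are illustrative assumptions.

```python
import random
from typing import Callable, Optional


def backoff_delay(attempt: int,
                  base: float = 1.0,
                  cap: float = 60.0,
                  retry_after: Optional[float] = None,
                  rng: Callable[[], float] = random.random) -> float:
    """Seconds to wait before retry number `attempt` (0-based)."""
    # Exponential ceiling: base, 2*base, 4*base, ... capped at `cap`.
    ceiling = min(cap, base * (2 ** attempt))
    # Full jitter: pick uniformly in [0, ceiling) so clients spread out.
    delay = rng() * ceiling
    # Never retry sooner than the carrier asked us to.
    if retry_after is not None:
        delay = max(delay, retry_after)
    return delay
```

In a test, pass `rng=lambda: 1.0` to check the ceilings and a seeded generator to check that simultaneous clients do not all pick the same delay.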

Smart rate limiting monitors multiple signals beyond simple request counts. When carrier error rates exceed 5%, reduce concurrent request limits. If response latency crosses 500ms, implement adaptive backoff. Use sliding window counters to detect sustained problems versus temporary spikes.
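To tell a sustained problem from a brief spike, the error rate has to be measured over a moving window rather than per request. The sketch below keeps an exact log of recent outcomes, which is simpler than the bucketed sliding window counter approximation and fine for modest volumes. The class name and the 60-second window are assumptions.

```python
from collections import deque
from typing import Deque, Tuple


class SlidingWindowErrorRate:
    """Error rate over the last window_seconds of recorded outcomes."""

    def __init__(self, window_seconds: float) -> None:
        self.window = window_seconds
        self.events: Deque[Tuple[float, bool]] = deque()  # (timestamp, is_error)
        self.errors = 0

    def _evict(self, now: float) -> None:
        # Drop outcomes that have aged out of the window.
        while self.events and self.events[0][0] <= now - self.window:
            _, was_error = self.events.popleft()
            self.errors -= int(was_error)

    def record(self, now: float, is_error: bool) -> None:
        self.events.append((now, is_error))
        self.errors += int(is_error)
        self._evict(now)

    def error_rate(self, now: float) -> float:
        self._evict(now)
        return self.errors / len(self.events) if self.events else 0.0


window = SlidingWindowErrorRate(window_seconds=60.0)
for t in range(19):
    window.record(float(t), is_error=False)
window.record(19.0, is_error=True)
single_spike = window.error_rate(19.0)     # 1 error in 20 requests
window.record(20.0, is_error=True)
window.record(21.0, is_error=True)
sustained = window.error_rate(21.0)        # 3 errors in 22 requests
```

One failure in twenty requests sits at the 5% threshold without crossing it, while two further failures push the rate well above it and would trigger a limit reduction.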

Distributed rate limiting with Redis requires atomic operations to prevent race conditions. Without atomicity, two workers can read the same token count and both spend the last token. Running the refill-check-consume sequence inside a single EVAL call avoids this, because Redis executes a script without interleaving commands from other clients.

Observability becomes crucial during the migration period. Track rate limit hit rates per carrier, tenant request patterns, and queue depths. Alert when any carrier shows sustained rate limiting or when tenant isolation breaks down. Monitor carrier-specific metrics: DHL's even distribution requirements, USPS's hourly quotas, and FedEx's burst tolerance patterns.
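As a starting point for those metrics, the sketch below counts requests and rate limit hits per carrier and tenant and reports which carriers exceed an alert threshold. It is an in-process illustration only. The class name and the 20% threshold in the usage are assumptions, and a real deployment would export these counters to its metrics system.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


class RateLimitMetrics:
    def __init__(self) -> None:
        self.requests: Dict[Tuple[str, str], int] = defaultdict(int)
        self.limited: Dict[Tuple[str, str], int] = defaultdict(int)

    def record(self, carrier: str, tenant: str, was_limited: bool) -> None:
        self.requests[(carrier, tenant)] += 1
        if was_limited:
            self.limited[(carrier, tenant)] += 1

    def hit_rate(self, carrier: str) -> float:
        """Share of requests to a carrier that were rate limited."""
        total = sum(n for (c, _), n in self.requests.items() if c == carrier)
        hits = sum(n for (c, _), n in self.limited.items() if c == carrier)
        return hits / total if total else 0.0

    def carriers_to_alert(self, threshold: float) -> List[str]:
        carriers = sorted({c for c, _ in self.requests})
        return [c for c in carriers if self.hit_rate(c) > threshold]


metrics = RateLimitMetrics()
for i in range(10):
    metrics.record("usps", "tenant-a", was_limited=(i < 4))
    metrics.record("fedex", "tenant-a", was_limited=False)
alerts = metrics.carriers_to_alert(threshold=0.2)
```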

For most production deployments, a simple stack of response caching, SDK-level retries, and a rate limit increase request to the carrier handles about 80% of use cases with minimal code changes. For higher throughput requirements, add a proxy layer that abstracts carrier complexity from application logic.

Testing and Validation Strategies

Traditional ping tests won't prepare you for the 2026 migration reality. During stress testing across DHL, UPS, and FedEx APIs simultaneously, each carrier's rate limiting behaved differently under sustained load. DHL's sliding window approach allowed burst capacity recovery within minutes, while UPS's fixed window required waiting full reset periods. FedEx showed the most aggressive throttling but provided clearer rate limit headers.

Load testing must replicate authentic request distributions, geographic origins, and payload variations. Create test scenarios with gradual ramp-up followed by sudden volume increases—mimicking Black Friday traffic patterns or batch processing attempting to validate 500 addresses simultaneously.
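A load profile of that shape can be generated as a list of per-second request targets and fed to whatever load tool you use. The sketch below is a minimal generator. The function name and the numbers in the usage are illustrative, not recommended test parameters.

```python
from typing import List


def ramp_then_spike(baseline_rps: int, peak_rps: int, ramp_seconds: int,
                    spike_rps: int, spike_seconds: int) -> List[int]:
    """Per-second request targets: linear ramp-up, then a sudden spike."""
    if ramp_seconds < 1 or spike_seconds < 0:
        raise ValueError("ramp_seconds must be >= 1 and spike_seconds >= 0")
    profile: List[int] = []
    for i in range(ramp_seconds):
        # Fraction of the way from baseline to peak at second i.
        fraction = i / (ramp_seconds - 1) if ramp_seconds > 1 else 1.0
        profile.append(round(baseline_rps + (peak_rps - baseline_rps) * fraction))
    # The spike models a batch job or peak-season surge arriving at once.
    profile.extend([spike_rps] * spike_seconds)
    return profile


profile = ramp_then_spike(baseline_rps=1, peak_rps=5, ramp_seconds=5,
                          spike_rps=50, spike_seconds=2)
```

Replaying the profile against a sandbox or a mocked carrier endpoint shows whether queue depth and denial counts recover after the spike or keep growing.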

Validate your rate limiting logic against carrier-specific behaviors. Test how your system handles DHL's requirement for evenly distributed requests versus UPS's allowance for burst traffic. Verify that USPS's 60-request hourly limit doesn't cascade into failures across other carrier integrations.

Performance benchmarking should compare algorithm effectiveness across different traffic patterns. Token buckets excel during mobile app launch scenarios where requests naturally batch. Leaky buckets provide predictable behavior when carriers like DHL expect steady request rates. Hybrid approaches handle the complex reality of multi-carrier environments where different APIs have incompatible rate limiting expectations.

The 2026 carrier API migration creates an unprecedented stress test for rate limiting systems. Your choice: spend months debugging rate limit edge cases and carrier-specific retry logic, or invest in adaptive rate limiting that survives whatever 2026 brings. The companies that thrive won't be those with perfect initial implementations—they'll be those who built systems resilient enough to handle the unknown carrier requirements still coming.