Preventing Cascading Failures in Multi-Carrier Integration: When Rate Limiting Becomes Your Worst Enemy

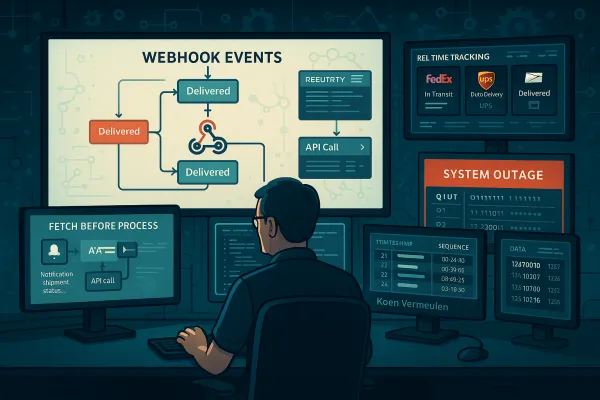

When the busiest ordering week arrives and your carrier experiences an outage, you're left unable to calculate shipping rates and customers aren't impressed. This scenario plays out repeatedly across carrier integration platforms, but the real culprit isn't usually a simple outage. The problem starts when upstream carrier APIs implement aggressive rate limiting during peak periods, creating the perfect storm for cascading failures that can bring down entire shipping operations.

Modern multi-carrier integration platforms face a unique challenge: temporary service degradations can turn minor issues into major outages as thousands of failed requests are instantly retried. When FedEx or UPS throttles their APIs during Black Friday traffic, your carefully designed system can collapse like dominoes, taking down checkout flows, label generation, and tracking updates across all your tenants.

The Anatomy of a Carrier Integration Catastrophe

Picture this: Black Friday morning, 9 AM GMT. Traffic to your multi-tenant shipping platform spikes to 50x normal volume as European retailers process their biggest sales day. FedEx enforces transaction quotas and rate limits on its API, with thousands of customer applications making requests at the same time, but your distributed system isn't prepared for what happens next.

Your five application servers, each thinking they have access to FedEx's full quota of 1,000 requests per minute, collectively send 5,000 requests. The API responds with 429 status codes. Your retry logic kicks in immediately. Within seconds, you're sending 25,000 requests, because each failed request triggers retries and the backoff isn't coordinated across servers.

Here's where carrier rate limits differ fundamentally from typical API throttling. Carriers sometimes take their APIs offline for updates or maintenance, and they typically announce these planned outages ahead of time. Seasonal traffic patterns, by contrast, create dynamic limits that aren't communicated in advance. When customers can't see rates, cart abandonment increases by 35% according to e-commerce data, which drives retry storms as desperate applications attempt to maintain service levels.

The compounding effect cascades through your architecture. Your Redis cluster, managing rate limit counters for 10,000 tenants, starts experiencing connection pool exhaustion. At the network layer, a rapid succession of outbound requests can exhaust the preallocated quota of SNAT ports if those ports aren't closed and recycled fast enough. What started as FedEx rate limiting has now become a platform-wide outage affecting UPS, DHL, and postal service integrations.

Multi-Tenant Amplification Effects

Multi-tenant shipping platforms like nShift, ShipEngine, and Cargoson face an amplification problem that single-tenant systems don't experience. When tenant isolation breaks down during rate limit failures, a single tenant's retry storm can consume shared connection pools, causing cross-tenant failures.

Your connection pooling strategy, designed for efficiency, becomes a bottleneck. Each Redis shard connection is shared across multiple tenants. When one tenant triggers aggressive retries due to carrier rate limiting, they saturate the connection pool. Other tenants, even those using completely different carriers, start experiencing timeouts as they can't acquire connections to update their rate limit counters.

Circuit breakers that should isolate failures become shared failure points. Your FedEx circuit breaker trips, but your fallback logic routes traffic to UPS. The increased UPS traffic, concentrated from multiple tenants simultaneously, hits UPS's rate limits. Now both carriers are down, and your alternative carrier failover is triggering DHL rate limits.

The Distributed Rate Limiting Dilemma

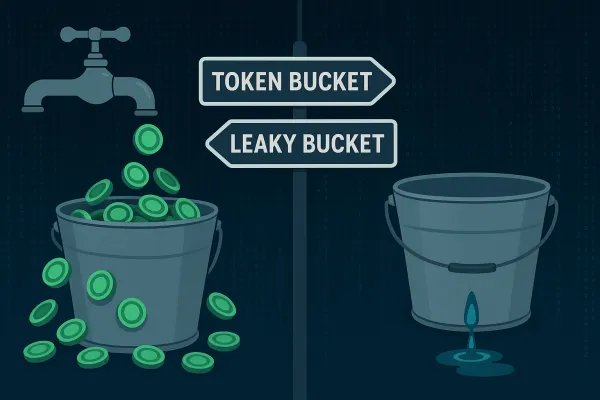

The fundamental challenge lies in coordinating rate limits across distributed instances. The idea is to split the rate limit of a provider API into parts which are then assigned to consumer instances, allowing them to control their request rate by themselves. This sounds elegant until you face the reality of dynamic scaling, network partitions, and variable traffic patterns.

When using a cluster of multiple nodes, we might need to enforce a global rate limit policy. If each node tracks only its own counter, a consumer can exceed the global limit simply by sending requests to different nodes. The greater the number of nodes, the more likely the consumer is to exceed the global limit.

Traditional approaches like sticky sessions create fault tolerance problems. When one of your five application servers fails, the load balancer redistributes traffic, but your rate limiting calculations are now wrong. The remaining four servers still cap themselves at 200 requests per minute each (1,000/5), so together they use only 800 of the 1,000-request quota while absorbing the traffic of five servers.
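To make the arithmetic concrete, here is a minimal sketch of a static quota split. The function name `per_instance_quota` is hypothetical and the numbers come from the five-server example above. A real system would also need a reliable way to learn how many instances are alive, which this sketch does not address.

```python
def per_instance_quota(global_limit: int, live_instances: int) -> int:
    """Split a carrier's global quota evenly across live instances."""
    if live_instances < 1:
        raise ValueError("need at least one live instance")
    return global_limit // live_instances


GLOBAL_LIMIT = 1_000  # requests per minute, from the example above

share_at_startup = per_instance_quota(GLOBAL_LIMIT, 5)     # share with five servers
share_after_failure = per_instance_quota(GLOBAL_LIMIT, 4)  # share with four servers

# If the four survivors keep their stale share, part of the quota is stranded.
stranded = GLOBAL_LIMIT - 4 * share_at_startup
```

With a stale share, 200 requests per minute of quota go unused until the split is recomputed, which is one reason static partitioning struggles under dynamic scaling.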

What we need is a centralized and synchronous storage system and an algorithm that can leverage it to compute the current rate for each client. An in-memory cache (like Memcached or Redis) is ideal. But centralized coordination creates its own problems.

Race Conditions in Rate Limit Enforcement

The naive approach uses a "get-then-set" pattern: retrieve the current counter, increment it, check if it exceeds the limit, then store it back. The problem with this model is that additional requests can come through in the time it takes to perform a full read-increment-store cycle, each attempting to store an incremented counter based on a stale (lower) value. This allows a consumer to send a very large number of requests and bypass the rate limiting controls.

For carrier integration platforms handling thousands of requests per second, these race conditions aren't theoretical. When FedEx's API starts responding slowly (latency increasing from 100ms to 2 seconds), the time window for race conditions expands dramatically. Multiple application servers reading the same counter value and all deciding they're under the limit can result in as much as 10x the intended request rate hitting the carrier API.

Redis's atomic operations, including Lua scripts, make precise rate limiting possible, but even atomic operations carry coordination overhead. One way to avoid the race is a distributed lock around the key, preventing any other process from reading or writing the counter. Such a lock becomes a significant bottleneck, though, and does not scale well. A better approach is "set-then-get": increment the counter first with an atomic operation, then check the value it returns, so the increment and the read cannot interleave with other requests.
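The set-then-get idea can be sketched as follows. This is an illustration under stated assumptions, not a production limiter: `AtomicCounterStore` is a hypothetical in-memory stand-in for a store with an atomic increment (such as the Redis `INCR` command), and `allow_request` uses a simple fixed window keyed by carrier.

```python
import threading
import time
from collections import defaultdict


class AtomicCounterStore:
    """In-memory stand-in for a store that offers an atomic increment."""

    def __init__(self):
        self._lock = threading.Lock()
        self._counts = defaultdict(int)

    def incr(self, key):
        # Increment and return the new value as one indivisible step.
        with self._lock:
            self._counts[key] += 1
            return self._counts[key]


def allow_request(store, carrier, limit, window_seconds=60, now=None):
    """Set-then-get: increment first, then decide based on the returned value."""
    now = time.time() if now is None else now
    window = int(now // window_seconds)  # fixed-window bucket number
    key = f"{carrier}:{window}"
    return store.incr(key) <= limit
```

Because every caller gets a distinct counter value back from the increment, exactly `limit` callers per window see a value at or below the limit, no matter how their requests interleave. This sketch never expires old window keys; with a real store you would set an expiry on each key.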

Circuit Breaker Patterns for Carrier Resilience

Circuit breakers in carrier integration require different thinking than typical microservice patterns. Carriers like FedEx and UPS often implement "soft" rate limiting - they don't immediately cut you off but gradually increase response times. Your circuit breaker needs to detect this degradation before it becomes a hard failure.
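One way to detect soft rate limiting is to treat slow responses as failures when deciding whether to open the breaker. The sketch below assumes a hypothetical `LatencyAwareBreaker` class with illustrative thresholds (1 second counts as slow, a 20-call window, a 30-second cooldown). It simplifies the half-open state to "close again after the cooldown".

```python
from collections import deque


class LatencyAwareBreaker:
    """Opens when too many recent calls were slow or failed."""

    def __init__(self, slow_after_s=1.0, window=20, max_bad_ratio=0.5, cooldown_s=30.0):
        self.slow_after_s = slow_after_s
        self.max_bad_ratio = max_bad_ratio
        self.cooldown_s = cooldown_s
        self.samples = deque(maxlen=window)  # True means slow or failed
        self.opened_at = None

    def record(self, latency_s, ok, now):
        """Record one carrier call; open the breaker if the window looks bad."""
        self.samples.append((not ok) or latency_s > self.slow_after_s)
        window_full = len(self.samples) == self.samples.maxlen
        if window_full and sum(self.samples) / len(self.samples) >= self.max_bad_ratio:
            self.opened_at = now

    def allow(self, now):
        """Return True if a call to the carrier may proceed."""
        if self.opened_at is None:
            return True
        if now - self.opened_at >= self.cooldown_s:
            # Cooldown elapsed: close again and start from a clean window.
            self.opened_at = None
            self.samples.clear()
            return True
        return False
```

Note that a successful but slow response still counts against the carrier, so the breaker can open before any hard failure is observed.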

Randomized exponential backoff prevents the thundering herd problem that amplifies carrier issues. Instead of all your application servers retrying at exactly the same intervals (1s, 2s, 4s, 8s), you add jitter: (1s + rand(0-0.5s)), (2s + rand(0-1s)), etc. This simple change can reduce peak retry traffic by 40-60%.
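The jitter scheme described above can be sketched in a few lines. The function name `backoff_delay` and the 60-second cap are assumptions for illustration; the jitter range (up to half of the base delay) follows the example intervals in the text.

```python
import random


def backoff_delay(attempt, base_s=1.0, cap_s=60.0, rng=random):
    """Exponential backoff plus random jitter of up to half the base delay."""
    delay = min(cap_s, base_s * (2 ** attempt))  # 1s, 2s, 4s, 8s, ... up to the cap
    return delay + rng.uniform(0, delay / 2)     # spread retries across servers


# Example: delays for the first four retry attempts on one server.
delays = [backoff_delay(attempt) for attempt in range(4)]
```

Each server draws its own jitter, so retries that would otherwise land on the same second are spread over a wider interval.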

The choice between per-carrier and global circuit breakers involves trade-offs. Per-carrier breakers provide fine-grained control - when FedEx fails, you can still serve UPS and DHL requests. But during Black Friday, carriers often fail simultaneously due to correlated traffic patterns. A global circuit breaker might be more appropriate, failing fast on all carrier requests and serving cached rates.

Modern platforms take different approaches to this challenge. Platforms like Transporeon and Blue Yonder implement enterprise-focused per-carrier circuits with sophisticated health scoring, while solutions like Cargoson optimize for SMB use cases with simpler global fallback patterns that prioritize availability over precision.

Implementing Retry Budgets

Retry budgets provide server-wide protection against cascade failures. You allocate a fixed number of retries per time window (e.g., 60 retries per minute across all tenants) and track consumption globally. When the budget is exhausted, new failures fail-fast instead of retrying.

Per-tenant retry budgets prevent noisy neighbor problems but require careful tuning. A large tenant processing 10,000 shipments per hour shouldn't consume the entire retry budget when their carrier integration fails. You might allocate budgets proportionally: enterprise tenants get 40% of retries, mid-market gets 35%, and small businesses share the remaining 25%.

Budget exhaustion becomes an early warning system. When you're consuming retry budgets faster than they replenish, it indicates systemic issues before they become outages. Alert on 80% budget utilization, not on circuit breaker trips.
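A retry budget along these lines might look like the sketch below. The class and function names are hypothetical, the window is a simple fixed one, and the tier percentages and the 80% alert threshold are the figures from the text.

```python
class RetryBudget:
    """Fixed-window retry budget: at most max_retries per window."""

    def __init__(self, max_retries, window_s=60.0):
        self.max_retries = max_retries
        self.window_s = window_s
        self.window_start = None
        self.used = 0

    def try_acquire(self, now):
        """Return True if a retry is allowed; otherwise the caller fails fast."""
        if self.window_start is None or now - self.window_start >= self.window_s:
            self.window_start = now  # start a fresh window
            self.used = 0
        if self.used >= self.max_retries:
            return False
        self.used += 1
        return True

    def utilization(self):
        return self.used / self.max_retries


def split_budget(total_retries, tier_percent):
    """Divide a global budget across tenant tiers by integer percentage."""
    return {tier: total_retries * pct // 100 for tier, pct in tier_percent.items()}


def should_alert(budget, threshold=0.8):
    """Early warning: alert once utilization reaches the threshold."""
    return budget.utilization() >= threshold


TIER_PERCENT = {"enterprise": 40, "mid_market": 35, "small_business": 25}
tier_budgets = {tier: RetryBudget(n) for tier, n in split_budget(60, TIER_PERCENT).items()}
```

With a global budget of 60 retries per minute, this split gives the tiers 24, 21, and 15 retries respectively, so one tier's retry storm cannot drain the others.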

Graceful Degradation Strategies

During carrier outages, not all requests are equal. Interactive rate shopping from checkout pages needs to be prioritized over background shipment syncing or tracking updates. Selective rate limiting drops background operations while keeping customer-facing functionality alive.
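Selective shedding can be expressed as a per-priority cutoff on quota utilization. In this sketch the priority classes mirror the ones named above, while the threshold values and the names `Priority` and `should_shed` are assumptions chosen for illustration.

```python
from enum import IntEnum


class Priority(IntEnum):
    CHECKOUT_RATES = 0    # interactive rate shopping from checkout pages
    TRACKING_UPDATE = 1   # tracking updates
    BACKGROUND_SYNC = 2   # background shipment syncing


# Hypothetical cutoffs: shed a class once quota utilization reaches its value.
SHED_AT = {
    Priority.CHECKOUT_RATES: 1.00,   # only shed when the quota is fully used
    Priority.TRACKING_UPDATE: 0.80,
    Priority.BACKGROUND_SYNC: 0.60,
}


def should_shed(priority, quota_utilization):
    """Return True if a request of this priority should be dropped or deferred."""
    return quota_utilization >= SHED_AT[priority]
```

At 70% utilization, for example, background syncing is deferred while tracking updates and checkout rate requests still go through.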

Cached rates become your lifeline during outages. Instead of failing completely, serve rates from your last successful API call with appropriate cache headers indicating staleness. A cached UPS Ground rate that's 30 minutes old is better than no rate at all, especially if you include cache age in the response.
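A minimal sketch of serving stale rates with an explicit age follows. The names `RateQuote`, `RateCache`, and `get_rate` are hypothetical, as is the 30-minute maximum age. The broad `except Exception` stands in for whatever errors your carrier client actually raises.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class RateQuote:
    amount: float
    currency: str
    fetched_at: float        # timestamp of the successful carrier call
    stale: bool = False
    age_seconds: float = 0.0


class RateCache:
    def __init__(self, max_age_s: float = 1800.0):
        self.max_age_s = max_age_s
        self._entries: Dict[str, RateQuote] = {}

    def put(self, key: str, quote: RateQuote) -> None:
        self._entries[key] = quote

    def get_stale(self, key: str, now: float) -> Optional[RateQuote]:
        """Return a cached quote marked stale, or None if missing or too old."""
        cached = self._entries.get(key)
        if cached is None or now - cached.fetched_at > self.max_age_s:
            return None
        return RateQuote(cached.amount, cached.currency, cached.fetched_at,
                         stale=True, age_seconds=now - cached.fetched_at)


def get_rate(key, fetch_live, cache, now):
    """Try the live carrier call first; fall back to the cache on failure."""
    try:
        quote = fetch_live(key)
    except Exception:  # assumption: real code would catch the client's specific errors
        return cache.get_stale(key, now)
    cache.put(key, quote)
    return quote
```

The caller can surface `stale` and `age_seconds` in the response so the storefront knows it is showing an older rate.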

Setting up an alternative live-rate carrier most closely mimics the experience your customers are used to. You configure the secondary carrier the same way you set up your primary one, and it supplies backup rates whenever the primary is unavailable. Some retailers do this anyway, to give customers several shipping methods to choose from. It's the most reliable way to ensure a smooth, consistent experience, even if one carrier is down.

Multi-Carrier Failover Architecture

Effective failover requires real-time carrier health scoring beyond simple up/down status. Track response times, error rates, and rate limit consumption to build a composite health score. When FedEx's health drops below 70% (high latency, increased 429s), start routing new requests to UPS while honoring existing FedEx rate limit constraints.
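As an illustration, a composite score could combine the three signals with fixed weights. Everything specific here is an assumption: the function names, the weights (0.4, 0.4, 0.2), and the 2-second latency budget. Only the 70% routing threshold comes from the text.

```python
def health_score(p95_latency_s, error_rate, quota_used,
                 latency_budget_s=2.0, weights=(0.4, 0.4, 0.2)):
    """Return a 0-100 score from latency, error rate, and quota consumption."""
    latency_ok = max(0.0, 1.0 - p95_latency_s / latency_budget_s)
    errors_ok = max(0.0, 1.0 - error_rate)
    headroom = max(0.0, 1.0 - quota_used)
    w_latency, w_errors, w_quota = weights
    return 100.0 * (w_latency * latency_ok + w_errors * errors_ok + w_quota * headroom)


def pick_carrier(scores, preference, minimum=70.0):
    """Return the first carrier in preference order whose score meets the minimum."""
    for carrier in preference:
        if scores.get(carrier, 0.0) >= minimum:
            return carrier
    return None  # no healthy carrier: the caller falls back to cached rates


scores = {
    "fedex": health_score(p95_latency_s=1.8, error_rate=0.30, quota_used=0.95),
    "ups": health_score(p95_latency_s=0.2, error_rate=0.01, quota_used=0.50),
}
chosen = pick_carrier(scores, preference=["fedex", "ups"])
```

In this example FedEx scores well below 70 because of high latency, frequent errors, and little remaining quota, so new requests are routed to UPS.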

Cross-carrier rate limit sharing prevents cascading failures during failover. If FedEx fails and you route all traffic to UPS, you need to ensure UPS can handle the additional load. Pre-negotiated rate limit increases or burst allowances with backup carriers become critical during peak periods.

Enterprise solutions like Oracle Transportation Management and SAP Transportation Management include sophisticated carrier diversity algorithms, while platforms focused on SMB segments like Cargoson implement simpler primary/secondary patterns that balance complexity with reliability needs.

Monitoring and Early Detection

Traditional application monitoring falls short for carrier integration platforms. You need carrier-specific observability that tracks API health, rate limit consumption, and error patterns.

Cache failures represent another critical vulnerability. Many rate limiting implementations rely heavily on in-memory or distributed caches for performance. If these caches fail or become unavailable, your system might default to either allowing all requests (risking overload) or denying all requests (causing unnecessary outages). Implement graceful degradation strategies like secondary storage systems, local fallback caches, or circuit breakers that can make reasonable decisions when the primary cache is unavailable.

Volume monitoring becomes complex with millions of API calls across dozens of carriers. Track rate limit consumption as a percentage of available quota, not just raw numbers. A carrier consuming 95% of quota with steady traffic patterns is healthier than one consuming 60% with high variability.

Synthetic monitoring for carrier APIs provides early warning of degradation. Send test requests for common operations (rate shopping, label generation, tracking) every 30 seconds. When synthetic test latency increases by 200% or error rates exceed 1%, trigger pre-emptive circuit breaking before customer traffic is affected.
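The trigger condition can be written down directly. This sketch assumes a hypothetical `should_preempt` function fed with a baseline latency and the results of recent probes. A 200% increase means the current latency is at least three times the baseline.

```python
def should_preempt(baseline_latency_s, current_latency_s, errors, probes,
                   max_latency_increase=2.0, max_error_rate=0.01):
    """Return True if synthetic probes suggest opening the breaker early."""
    if baseline_latency_s <= 0:
        raise ValueError("baseline latency must be positive")
    latency_increase = (current_latency_s - baseline_latency_s) / baseline_latency_s
    error_rate = errors / probes if probes else 0.0
    return latency_increase >= max_latency_increase or error_rate > max_error_rate
```

For example, with a 0.5-second baseline, a current latency of 1.6 seconds is an increase of more than 200% and trips the check, while 1.0 second does not.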

Platforms like Manhattan Active and FreightPOP invest heavily in proprietary monitoring solutions, while Cargoson balances comprehensive monitoring with operational simplicity appropriate for their market segment.

SLO Design for Carrier Integration

Defining meaningful SLOs when carrier performance varies wildly requires careful thought. A 99.9% uptime SLO is meaningless if your carriers collectively provide 97% availability. Instead, define SLOs around customer experience: "95% of rate requests return within 2 seconds" accounts for carrier variability while focusing on user impact.

Error budgets across multiple carrier dependencies need correlation analysis. When FedEx consumes 50% of your monthly error budget in one day, you might need to be more conservative with UPS failures for the remainder of the month. Error budget allocation by carrier (weighted by usage volume) provides better incident response guidance.
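Volume-weighted allocation is simple proportional arithmetic. In this sketch the function names and all numbers are hypothetical: a monthly budget of 50,000 failed or slow requests, split across three carriers by request volume.

```python
def allocate_error_budget(total_budget, volume_by_carrier):
    """Split an error budget across carriers in proportion to request volume."""
    total_volume = sum(volume_by_carrier.values())
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return {carrier: total_budget * volume // total_volume
            for carrier, volume in volume_by_carrier.items()}


def fraction_burned(allocated, failures):
    """Share of one carrier's allocation that has already been consumed."""
    return failures / allocated if allocated else 1.0


monthly_volume = {"fedex": 500_000, "ups": 300_000, "dhl": 200_000}
allocation = allocate_error_budget(50_000, monthly_volume)
fedex_burn = fraction_burned(allocation["fedex"], failures=12_500)
```

Here FedEx receives half of the budget because it carries half of the volume, and 12,500 failures would consume half of that allocation.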

Multi-carrier outages require different response patterns than single-carrier issues. When two or more carriers fail simultaneously, your incident response should immediately escalate to serve cached rates and activate backup carrier agreements rather than attempting to restore failed carriers.

The key insight for preventing cascading failures isn't perfect rate limiting - it's building systems that degrade gracefully when rate limiting inevitably fails. Load that ramps up gradually over a few minutes is rarely the problem, because autoscaling has time to add enough capacity to handle it. The damage comes from very rapid onset, when autoscaling cannot react in time, and that is the case a global, near-instantaneous rate limit has to cover.

Your next steps should focus on implementing retry budgets and synthetic monitoring before the next peak season. Rate limiting failures are inevitable, but cascading platform outages are preventable with proper architectural patterns and operational discipline.