Production-Grade Idempotency for Multi-Carrier Integration: Surviving OAuth Cascade Failures and Authentication Race Conditions Without Creating Duplicate Shipments

The numbers tell a stark story. API downtime surged by 60% between Q1 2024 and Q1 2025, with average uptime dropping from 99.66% to 99.46%. For carrier integration teams, this means something worse than network timeouts: duplicate shipments and inventory mismanagement when retry logic fails.

73% of integration teams reported production authentication failures after UPS completed their OAuth migration in January 2025. The issue manifested as intermittent 401 responses during peak traffic periods, particularly affecting OAuth token refresh operations. Your retry logic kicks in, but traditional idempotency key systems don't account for authentication cascade failures. Multiple identical shipment requests with different authentication sessions bypass deduplication entirely.

Authentication-Aware Idempotency: Beyond Request-Level Keys

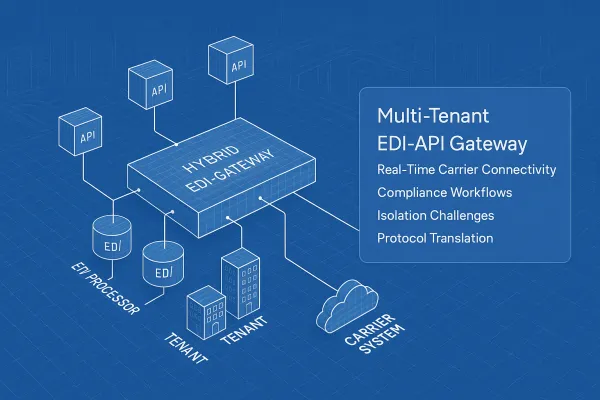

Standard idempotency implementations scope keys to individual API calls. When your Cargoson integration needs to refresh an OAuth token mid-flow, the subsequent retry uses a different authentication session while carrying the same idempotency key. To the carrier's API, this appears as a completely new request.

The fix requires authentication-aware idempotency that tracks business operations across auth boundaries. Instead of keying on `{request_id}`, use `{tenant_id}:{business_operation_id}:{carrier}:{operation_type}`. Your service then creates one idempotent "session" per business operation, keyed off the customer identifier and the client's unique request identifier, and that session outlives any single access token.
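As a minimal sketch of that key format, the helpers below build the composite key and a payload digest suitable for the schema's `request_hash` column. The function names `build_business_key` and `hash_request` and the sample identifiers are hypothetical, and hashing canonical JSON with SHA-256 is one reasonable choice rather than a requirement.

```python
import hashlib
import json


def build_business_key(tenant_id, business_operation_id, carrier, operation_type):
    """Compose the key from business identifiers only; no token or session data."""
    parts = [tenant_id, business_operation_id, carrier.lower(), operation_type]
    if not all(parts):
        raise ValueError("every key part must be a non-empty string")
    return ":".join(parts)


def hash_request(payload):
    """SHA-256 of canonical JSON, so reuse of a key with a different payload is detectable."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


key = build_business_key("tenant-42", "order-1001", "UPS", "create_label")
digest = hash_request({"weight_kg": 2.5, "to": "Tallinn"})
```

Because neither helper reads anything from the OAuth session, a retry after a token refresh produces the same key and the same digest.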

Here's a PostgreSQL schema that survives authentication transitions:

```sql
CREATE TABLE idempotency_store (
    id UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    business_key VARCHAR(255) UNIQUE NOT NULL,
    tenant_id UUID NOT NULL,
    operation_type VARCHAR(50) NOT NULL,
    carrier VARCHAR(50) NOT NULL,
    request_hash CHAR(64) NOT NULL,
    response_status INTEGER,
    response_body JSONB,
    auth_session_id VARCHAR(255),
    created_at TIMESTAMP DEFAULT NOW(),
    expires_at TIMESTAMP NOT NULL,
    processed BOOLEAN DEFAULT FALSE
);

CREATE INDEX idx_idempotency_lookup
    ON idempotency_store (business_key, processed, expires_at);
```

The `auth_session_id` tracks which authentication context processed the request, but the `business_key` remains constant across token refreshes. When UPS returns a 401, your system can retry with fresh credentials while maintaining the same business operation identity.
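A minimal sketch of that retry, assuming a carrier client that raises an exception on a 401: the `AuthenticationError` class, the `call_with_auth_retry` helper, and the fake carrier in the demo are all hypothetical stand-ins. The point it illustrates is that the token changes between attempts while the business key does not.

```python
class AuthenticationError(Exception):
    """Raised by the (hypothetical) carrier client on a 401 response."""


def call_with_auth_retry(business_key, send, refresh_token, max_auth_retries=1):
    """Call send(business_key, token); on a 401, refresh the token and retry.

    The business_key is passed through unchanged on every attempt.
    """
    token = refresh_token()
    for attempt in range(max_auth_retries + 1):
        try:
            return send(business_key, token)
        except AuthenticationError:
            if attempt == max_auth_retries:
                raise
            token = refresh_token()  # new auth session, same business operation


# Demo with a fake carrier that rejects the first token.
tokens = iter(["token-old", "token-new"])
seen = []


def fake_send(key, token):
    seen.append((key, token))
    if token == "token-old":
        raise AuthenticationError("401")
    return {"label_id": "L-1"}


result = call_with_auth_retry(
    "t1:order-7:ups:create_label", fake_send, lambda: next(tokens)
)
```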

Handling Partial Success Across Authentication Boundaries

UPS might handle 100 requests per minute reliably, while FedEx starts rate-limiting at 75. DHL's European endpoints fail more subtly: authentication works, rate requests succeed, but label generation times out after 45 seconds. Standard retry logic assumes the entire operation failed and resubmits everything, but DHL's systems may have processed the first label request successfully.

Track operation lifecycle through state transitions that understand partial completion:

```sql
CREATE TYPE operation_state AS ENUM (
    'initiated',
    'auth_validated',
    'rates_obtained',
    'label_requested',
    'label_generated',
    'completed',
    'failed',
    'auth_expired_retry'
);

ALTER TABLE idempotency_store
    ADD COLUMN current_state operation_state DEFAULT 'initiated',
    ADD COLUMN state_transitions JSONB DEFAULT '[]'::jsonb;
```

When authentication fails at the label generation stage, your system knows not to re-request rates. The retry targets only the failed operation segment, preventing duplicate processing upstream.
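One way to derive "what is left to do" from the recorded history is sketched below. It assumes `state_transitions` is stored as a plain list of state names in the order they occurred, which the schema does not specify, and the `steps_to_run` helper is hypothetical.

```python
PIPELINE = ("initiated", "auth_validated", "rates_obtained",
            "label_requested", "label_generated", "completed")


def steps_to_run(state_transitions):
    """Given the recorded state history, return the pipeline states still to reach."""
    reached = [s for s in state_transitions if s in PIPELINE]
    last_index = max((PIPELINE.index(s) for s in reached), default=0)
    todo = list(PIPELINE[last_index + 1:])
    auth_expired = bool(state_transitions) and state_transitions[-1] == "auth_expired_retry"
    if auth_expired and "auth_validated" not in todo:
        # Re-authenticate first, then resume after the last completed stage.
        todo.insert(0, "auth_validated")
    return todo


history = ["initiated", "auth_validated", "rates_obtained", "auth_expired_retry"]
plan = steps_to_run(history)
```

For this history the plan re-validates authentication and continues with the label stages; `rates_obtained` is not in the plan, so rates are not requested a second time.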

Circuit Breaker Patterns for Authentication Cascade Failures

October's failures demonstrated why treating 429 responses like outages creates unnecessary panic. When DHL returns a 429, your system should implement exponential backoff with jitter, not immediately fail over to backup carriers.
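Exponential backoff with jitter can be sketched in a few lines. This version uses the "full jitter" variant (a uniformly random delay up to the exponential ceiling); the function name, the 1-second base, and the 300-second cap are illustrative assumptions, not carrier-documented values.

```python
import random


def backoff_with_jitter(attempt, base=1.0, cap=300.0, rng=random):
    """Full-jitter backoff: a random delay in [0, min(cap, base * 2**attempt)]."""
    ceiling = min(cap, base * (2 ** attempt))
    return rng.uniform(0.0, ceiling)


# Delays for the first five consecutive 429s, using a seeded generator
# so the demo is repeatable.
seeded = random.Random(7)
delays = [backoff_with_jitter(n, rng=seeded) for n in range(1, 6)]
```

Randomizing the delay keeps many clients that were throttled at the same moment from retrying in lockstep.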

Authentication failures require different circuit breaker thresholds than capacity issues. A 401 during token refresh might indicate a temporary OAuth provider hiccup, while consecutive 401s suggest credential corruption requiring manual intervention.

```python
from datetime import datetime, timezone
from enum import Enum, auto


class CircuitState(Enum):
    # Assumed definition; only these three states are used below.
    CONTINUE = auto()
    BACKOFF_REQUIRED = auto()
    AUTH_RECOVERY_NEEDED = auto()


class AuthAwareCircuitBreaker:
    def __init__(self, carrier, auth_failure_threshold=3,
                 rate_limit_threshold=5):
        self.carrier = carrier
        self.auth_failures = 0
        self.rate_limit_failures = 0
        self.auth_threshold = auth_failure_threshold
        self.rate_threshold = rate_limit_threshold
        self.last_auth_failure = None

    def record_failure(self, error_code, operation):
        """Return (state, backoff_seconds) for a failed carrier call."""
        now = datetime.now(timezone.utc)
        if error_code == 401:
            self.auth_failures += 1
            self.last_auth_failure = now
            if self.auth_failures >= self.auth_threshold:
                # Trigger auth session reset, not failover
                return CircuitState.AUTH_RECOVERY_NEEDED, 0
        elif error_code == 429:
            self.rate_limit_failures += 1
            # Exponential backoff in seconds, capped at five minutes
            backoff_time = min(300, 2 ** self.rate_limit_failures)
            return CircuitState.BACKOFF_REQUIRED, backoff_time
        return CircuitState.CONTINUE, 0
```

Monitor authentication transition periods specifically. A reactive approach, which refreshes the token only after a 401, means the job fails partway through and then needs retry logic, idempotency guarantees, and partial-state recovery, all because a token expired on a schedule you could have predicted. Track request success rates during OAuth refresh windows and alert when authentication failures correlate with increased duplicate processing.
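The proactive alternative is to refresh shortly before expiry instead of after a 401. The sketch below assumes a `fetch_token` callable that returns an access token and its lifetime in seconds; the `ProactiveTokenProvider` class, the 60-second margin, and the injected clock are hypothetical and exist only to make the idea concrete and testable.

```python
import time


class ProactiveTokenProvider:
    def __init__(self, fetch_token, refresh_margin=60.0, clock=time.monotonic):
        self._fetch_token = fetch_token  # returns (access_token, expires_in_seconds)
        self._margin = refresh_margin
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        """Return a token that stays valid for at least refresh_margin seconds."""
        if self._token is None or self._clock() >= self._expires_at - self._margin:
            self._token, expires_in = self._fetch_token()
            self._expires_at = self._clock() + expires_in
        return self._token


# Demo with a fake clock and a fake token endpoint.
now = [0.0]
issued = []


def fake_fetch():
    issued.append(f"token-{len(issued) + 1}")
    return issued[-1], 3600


provider = ProactiveTokenProvider(fake_fetch, refresh_margin=60, clock=lambda: now[0])
first = provider.get()    # initial fetch
now[0] = 3500.0
same = provider.get()     # still outside the refresh margin
now[0] = 3545.0
rotated = provider.get()  # inside the margin, so a new token is fetched
```

A job that asks the provider for a token before each carrier call should not start a label request with credentials that are about to expire, although a 401 handler is still needed for revoked tokens.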

Multi-Carrier Failover Without Duplicate Creation

Like any business, website, or service, carriers like UPS, USPS and FedEx are not immune to issues. During an outage, no one can access rates from a carrier. Enterprise TMS systems often implement carrier failover, but the challenge lies in ensuring failover doesn't create duplicate shipments across different carriers.

Design state machine patterns that track shipment lifecycle across all carriers. When UPS fails and you fail over to FedEx, the idempotency system must recognize this as the same business operation, not separate requests requiring independent processing.

```python
business_key = f"{tenant_id}:shipment:{order_id}:{attempt_sequence}"

# Original request to UPS
ups_key = f"{business_key}:ups:create_label"

# Failover to FedEx uses same business context
fedex_key = f"{business_key}:fedex:create_label"

# Both operations share the same shipment identity (the business_key prefix)
# Only one can succeed at the business logic level
```

The implementation requires cross-carrier coordination. Platforms like Cargoson, nShift, EasyPost, and ShipEngine handle this by maintaining business-level idempotency above the individual carrier API calls. Their success rates are higher precisely because they've already debugged these production failure modes at scale.
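To make "business-level idempotency above the carrier calls" concrete, here is a single-process sketch. The `ShipmentClaimRegistry` class and `create_label_with_failover` function are hypothetical, the in-memory dictionary stands in for a table with a unique shipment-level key, and a `ConnectionError` stands in for whatever your client raises when a carrier is unavailable.

```python
class ShipmentClaimRegistry:
    """In-memory stand-in for a table with a UNIQUE shipment-level key."""

    def __init__(self):
        self._winner = {}  # shipment business_key -> carrier that created the label

    def try_commit(self, business_key, carrier):
        """Record carrier as the creator unless another carrier already won."""
        return self._winner.setdefault(business_key, carrier) == carrier

    def winner(self, business_key):
        return self._winner.get(business_key)


def create_label_with_failover(business_key, carrier_calls, registry):
    """Try carriers in order; return the carrier that owns the shipment, or None."""
    for carrier, call in carrier_calls:
        existing = registry.winner(business_key)
        if existing is not None:
            return existing  # this shipment already has a label
        try:
            call(f"{business_key}:{carrier}:create_label")
        except ConnectionError:
            continue  # carrier unavailable: fail over to the next one
        registry.try_commit(business_key, carrier)
        return registry.winner(business_key)
    return None


def ups_down(key):
    raise ConnectionError("UPS unavailable")


calls = []


def fedex_ok(key):
    calls.append(key)


registry = ShipmentClaimRegistry()
carriers = [("ups", ups_down), ("fedex", fedex_ok)]
first = create_label_with_failover("t1:shipment:1001:1", carriers, registry)
second = create_label_with_failover("t1:shipment:1001:1", carriers, registry)
```

The second call returns the recorded winner without contacting any carrier. This sketch does not cover the ambiguous case where a carrier created the label but the response was lost; that still requires checking the carrier's own record before failing over.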

Monitoring Idempotency Violations in Production

While Datadog might catch your server metrics and New Relic monitors your application performance, neither understands why UPS suddenly started returning 500 errors for rate requests during peak shipping season. Traditional monitoring focuses on HTTP status codes and response times. Idempotency violations manifest as business logic failures that bypass standard health checks.

Implement duplicate detection monitoring that tracks request patterns over sliding windows:

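```sql
-- Alert when identical business operations succeed multiple times.
-- business_key is UNIQUE per row, so group on the shared shipment prefix
-- (the key without its trailing ":{carrier}:{operation_type}" segments).
SELECT
    regexp_replace(business_key, ':[^:]+:[^:]+$', '') AS shipment_key,
    COUNT(*) AS success_count,
    ARRAY_AGG(DISTINCT carrier) AS carriers_used,
    MIN(created_at) AS first_success,
    MAX(created_at) AS last_success
FROM idempotency_store
WHERE
    response_status BETWEEN 200 AND 299
    AND created_at > NOW() - INTERVAL '1 hour'
GROUP BY shipment_key
HAVING COUNT(*) > 1;
```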

Alert when identical business operations generate multiple successful responses within your deduplication timeframe. This catches violations before they impact inventory systems or create billing discrepancies. Intermittent failures of this kind are common with carrier APIs, and availability checks miss them too: a standard health check might ping an endpoint every minute and report "UP" while missing the 30-second windows when actual rate requests fail.
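The same sliding-window check can run in the application, flagging a duplicate at the moment the second success is recorded. The `DuplicateSuccessMonitor` class below is a hypothetical in-memory sketch; it assumes timestamps in seconds and a shipment-level key shared by all carrier attempts.

```python
from collections import defaultdict, deque


class DuplicateSuccessMonitor:
    def __init__(self, window_seconds=3600.0):
        self._window = window_seconds
        self._successes = defaultdict(deque)  # shipment key -> success timestamps

    def record_success(self, shipment_key, timestamp):
        """Record a 2xx result; return True if it is a duplicate within the window."""
        events = self._successes[shipment_key]
        # Drop successes that have aged out of the window.
        while events and timestamp - events[0] > self._window:
            events.popleft()
        events.append(timestamp)
        return len(events) > 1


monitor = DuplicateSuccessMonitor(window_seconds=3600)
first_seen = monitor.record_success("t1:shipment:1001:1", 0.0)
duplicate = monitor.record_success("t1:shipment:1001:1", 10.0)
after_window = monitor.record_success("t1:shipment:1001:1", 3611.0)
```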

Implementation: PostgreSQL-Based Idempotency with Auth Context

Here's a production-ready implementation that handles authentication-aware idempotency:

```python
class AuthenticationError(Exception):
    """Raised when the carrier rejects the current credentials (for example, a 401)."""


class AuthAwareIdempotencyManager:
    # The underscore-prefixed storage and locking helpers are not shown here.

    def __init__(self, db_pool, redis_client=None):
        self.db = db_pool
        self.cache = redis_client

    async def execute_idempotent(self, business_key, operation_func,
                                 request_hash, tenant_id, carrier):
        # Check for existing operation
        existing = await self._get_existing_operation(business_key)
        if existing and existing['processed']:
            if 200 <= existing['response_status'] < 300:
                return existing['response_body'], existing['response_status']
            elif self._should_retry(existing):
                # Auth failure or retriable error, proceed with retry
                pass
            else:
                # Non-retriable failure, return cached result
                return existing['response_body'], existing['response_status']

        # Execute with distributed lock to prevent concurrent processing
        lock_key = f"idempotency_lock:{business_key}"
        async with self._distributed_lock(lock_key, timeout=30):
            # Double-check after acquiring lock; a retriable failure must
            # not short-circuit the retry
            existing = await self._get_existing_operation(business_key)
            if (existing and existing['processed']
                    and not self._should_retry(existing)):
                return existing['response_body'], existing['response_status']

            try:
                # Record operation start
                await self._create_operation_record(
                    business_key, request_hash, tenant_id, carrier
                )

                # Execute the actual operation
                response_body, status_code = await operation_func()

                # Record successful completion
                await self._complete_operation(
                    business_key, response_body, status_code
                )
                return response_body, status_code

            except AuthenticationError as e:
                # Record auth failure, allow retry with new session
                await self._record_auth_failure(business_key, str(e))
                raise

            except Exception as e:
                # Record general failure
                await self._record_failure(business_key, str(e))
                raise

    def _should_retry(self, existing_op):
        # Retry on auth failures or timeouts, not on business logic errors
        return (existing_op['response_status'] == 401 or
                existing_op['response_status'] == 504 or
                existing_op.get('error_type') == 'timeout')
```

The implementation uses distributed locking to prevent race conditions during OAuth refresh windows. The token refresh itself needs the same care when the provider rotates refresh tokens. If the network drops after the provider issues the new token but before your database commits the update, your system state is corrupted: the provider has rotated the refresh token, but your application still holds the old one. The next refresh attempt fails with an `invalid_grant` error, your application is locked out, and the end user must manually re-authenticate.
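A narrower, related problem is several workers trying to refresh the same rotating token at once. The sketch below serializes refreshes with an `asyncio.Lock` and a generation counter so only the first caller spends the refresh token. The `RotatingTokenStore` class and the fake provider are hypothetical, the lock is in-process only (a distributed lock would be needed across workers), and surviving the dropped-connection case described above additionally requires writing the new refresh token to durable storage.

```python
import asyncio


class RotatingTokenStore:
    def __init__(self, refresh_call, refresh_token):
        self._refresh_call = refresh_call  # async: old refresh token -> (access, new refresh)
        self._refresh_token = refresh_token
        self._access_token = None
        self._generation = 0
        self._lock = asyncio.Lock()

    async def refresh(self, seen_generation):
        """Refresh once per generation; late callers reuse the winner's result."""
        async with self._lock:
            if seen_generation == self._generation:
                access, new_refresh = await self._refresh_call(self._refresh_token)
                # Store the rotated refresh token before releasing the lock.
                self._refresh_token = new_refresh
                self._access_token = access
                self._generation += 1
            return self._access_token, self._generation


async def demo():
    calls = []

    async def fake_refresh(refresh_token):
        calls.append(refresh_token)
        await asyncio.sleep(0)
        return f"access-{len(calls)}", f"refresh-{len(calls)}"

    store = RotatingTokenStore(fake_refresh, "refresh-0")
    # Five workers all saw generation 0 fail with a 401 and ask for a refresh.
    results = await asyncio.gather(*(store.refresh(0) for _ in range(5)))
    return calls, results


calls, results = asyncio.run(demo())
```

Only one refresh request reaches the fake provider, and all five callers receive the same new access token.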

Redis Patterns for High-Throughput Scenarios

For carrier integrations handling thousands of requests per minute, PostgreSQL-based idempotency creates database bottlenecks. Use Redis for hot-path operations with PostgreSQL as the durability layer:

```python
import asyncio
import json
import time


class HybridIdempotencyStore:
    def __init__(self, redis_client):
        # Assumed: an asyncio-compatible Redis client
        self.redis = redis_client
        self._pending_writes = set()

    async def check_operation(self, business_key):
        # First check Redis for recent operations (last 5 minutes)
        cached_result = await self.redis.hgetall(f"idem:{business_key}")
        if cached_result:
            return self._deserialize_cached_result(cached_result)

        # Fall back to PostgreSQL for longer-term storage
        return await self._check_database(business_key)

    async def record_operation(self, business_key, result, ttl=300):
        # Write to both Redis (for speed) and PostgreSQL (for durability)
        pipeline = self.redis.pipeline()
        pipeline.hset(f"idem:{business_key}", mapping={
            'status': result.status_code,
            'body': json.dumps(result.body),
            'timestamp': time.time()
        })
        pipeline.expire(f"idem:{business_key}", ttl)
        await pipeline.execute()

        # Async write to PostgreSQL (don't block the response). Keep a
        # reference so the task is not garbage-collected before it finishes.
        task = asyncio.create_task(self._persist_to_database(business_key, result))
        self._pending_writes.add(task)
        task.add_done_callback(self._pending_writes.discard)
```

This pattern handles the reality that weekly API downtime rose to 55 minutes in Q1 2025, while maintaining sub-millisecond idempotency checks for duplicate requests.

When a client sees any kind of error, it can ensure the convergence of its own state with the server's by retrying, and can continue to retry until it verifiably succeeds. This fully addresses the problem of an ambiguous failure because the client knows that it can safely handle any failure using one simple technique.

Building production-grade idempotency for carrier integration requires understanding that authentication failures are fundamentally different from business logic errors. Your system needs to distinguish between "try again with fresh credentials" and "this operation has already been processed successfully." The patterns above prevent duplicate shipments while maintaining the reliability that modern logistics operations demand.