Multi-Tenant Rate Limiting Coordination for Carrier Integration: Preventing Cross-Tenant Cascade Failures During the 2026 API Migration Crisis

USPS's new APIs enforce strict rate limits of approximately 60 requests per hour, down from roughly 6,000 requests per minute without throttling in the legacy system. USPS Web Tools shut down on January 25, 2026, and FedEx SOAP endpoints retire on June 1, 2026. For enterprise teams managing thousands of shipments daily, this creates a perfect storm: forced migrations under hard deadlines while dealing with the new reality of aggressive rate limiting across all major carriers.

The math is brutal. At roughly 60 requests per hour, the new limit allows 6,000 times less throughput than the legacy default of 6,000 requests per minute (360,000 per hour), and companies that relied on that default are only now discovering the bottlenecks. A batch job that previously processed 10,000 addresses in ten minutes now requires about 167 hours. Rate shopping during order processing? Forget about it during peak hours.
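The arithmetic behind these figures, as a quick Python check that uses only the numbers quoted above:

```python
# Figures quoted in the text: legacy ~6,000 requests/minute, new ~60 requests/hour.
LEGACY_PER_HOUR = 6_000 * 60   # 360,000 requests per hour
NEW_PER_HOUR = 60

slowdown = LEGACY_PER_HOUR // NEW_PER_HOUR   # how many times less throughput
batch_hours = 10_000 / NEW_PER_HOUR          # hours for a 10,000-address batch

print(f"throughput reduction: {slowdown:,}x")
print(f"10,000-address batch: {batch_hours:.1f} hours")
```

This prints a reduction of 6,000x and 166.7 hours for the batch, which rounds to the 167 hours cited.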

But here's what most integration teams missed: this isn't just a USPS problem. If you're building multi-tenant carrier integration systems where dozens or hundreds of tenants share the same carrier quotas, aggressive rate limiting turns a forced migration into a genuine crisis.

Traditional rate limiting fails spectacularly in multi-tenant environments. When tenant A burns through the shared USPS quota during their morning batch import, tenant B's real-time checkout validations start failing. Your monitoring shows everything green because the API is responding normally—it's just rejecting 90% of requests with rate limit errors.

The Cross-Tenant Quota Bleeding Problem

Single global rate limiting creates cascade failures across tenant boundaries. Picture this: your middleware serves 50 e-commerce tenants, all sharing one USPS API key with that precious 60-request-per-hour limit. Tenant A (a high-volume electronics retailer) runs its daily address cleanup job at 6 AM, consuming 45 requests in the first minute. The other 49 tenants are left to split the remaining 15 requests for the next 59 minutes, even though they've done nothing wrong.

The coordination problem gets worse with multiple carriers. USPS limits hit first, so your system fails over to UPS address validation. But UPS has different rate structures, different error responses, and different timeout behaviors. During DynamoDB DNS issues, UPS's API can return 500 errors while maintaining session state inconsistently: your retry logic generates new tokens, but the carrier's backend still holds references to the old sessions.

Traditional solutions like Redis counters or database-backed rate limiters assume you control the entire quota pool. But carriers own the limits, change them without notice, and apply them in ways that don't map cleanly to tenant boundaries.

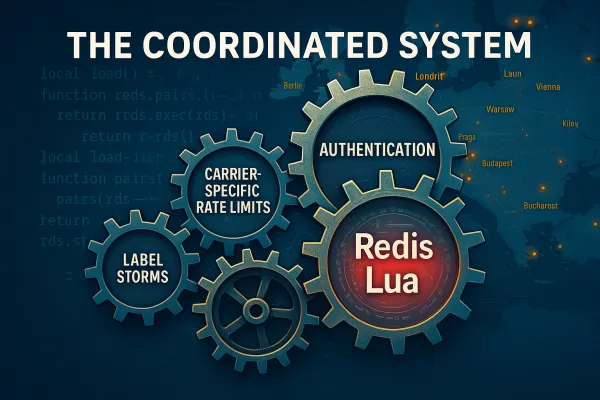

Hierarchical Multi-Tenant Rate Limiting Architecture

Effective multi-tenant carrier integration requires rate limiting at three distinct levels: tenant-level quotas that prevent any single tenant from consuming shared resources, carrier-level throttling that respects external API limits, and endpoint-specific controls that handle service-specific constraints.
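A minimal in-memory Python sketch of the three levels, assuming a one-hour sliding-window log at each level. The class names, the `(carrier, endpoint)` keying, and the default tenant limit of 5 are illustrative assumptions; a production version would keep this state in shared storage such as Redis rather than in process memory. A request is admitted only if all three buckets have room, and it is then recorded in all three, so a rejection at one level never consumes quota at another.

```python
import time
from collections import deque
from typing import Dict, Optional, Tuple


class SlidingWindow:
    """Sliding-window log: at most `limit` events in any `window` seconds."""

    def __init__(self, limit: int, window: float = 3600.0):
        self.limit = limit
        self.window = window
        self.events: deque = deque()

    def has_capacity(self, now: float) -> bool:
        # Evict timestamps that have left the window before counting.
        while self.events and self.events[0] <= now - self.window:
            self.events.popleft()
        return len(self.events) < self.limit

    def record(self, now: float) -> None:
        self.events.append(now)


class HierarchicalLimiter:
    """Hypothetical tenant -> carrier -> endpoint limiter held in process memory."""

    def __init__(self, tenant_limits: Dict[str, int], carrier_limits: Dict[str, int],
                 endpoint_limits: Dict[Tuple[str, str], int], default_tenant_limit: int = 5):
        self.tenant_limits = tenant_limits  # same per-tenant limit applied to each carrier
        self.default_tenant_limit = default_tenant_limit
        self.tenants: Dict[Tuple[str, str], SlidingWindow] = {}
        self.carriers = {c: SlidingWindow(n) for c, n in carrier_limits.items()}
        self.endpoints = {k: SlidingWindow(n) for k, n in endpoint_limits.items()}

    def allow(self, tenant_id: str, carrier: str, endpoint: str,
              now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        key = (tenant_id, carrier)
        if key not in self.tenants:
            limit = self.tenant_limits.get(tenant_id, self.default_tenant_limit)
            self.tenants[key] = SlidingWindow(limit)
        buckets = [self.tenants[key], self.carriers[carrier],
                   self.endpoints[(carrier, endpoint)]]
        # Check every level first so a rejection never consumes quota elsewhere.
        if not all(b.has_capacity(now) for b in buckets):
            return False
        for b in buckets:
            b.record(now)
        return True
```

With a 40/15 split of USPS's 60 hourly requests, `HierarchicalLimiter({"tenant_a": 40, "tenant_b": 15}, {"usps": 60}, {("usps", "address_validation"): 60})` admits tenant A's first 40 requests in an hour and rejects the 41st, while tenant B's requests are still admitted.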

Build tenant quota buckets with configurable limits. Each tenant gets allocated a portion of the carrier's total quota—USPS's 60 requests per hour might be divided as 40 requests for your largest tenant, 15 for medium tenants, and 5 reserved for small tenants or emergency overflow. Use Redis sorted sets to track per-tenant usage with sliding time windows:

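```lua
-- Sliding-window quota check, run atomically via EVAL/EVALSHA.
-- KEYS[1]: "rate_limit:usps:address_validation:<tenant_id>"
-- KEYS[2]: "quota:usps:<tenant_id>"
-- ARGV[1]: current time in seconds; ARGV[2]: unique request id
local tenant_key = KEYS[1]
local current_time = tonumber(ARGV[1])
local window_start = current_time - 3600

-- Drop requests that fell out of the one-hour window
redis.call("ZREMRANGEBYSCORE", tenant_key, "-inf", window_start)
local current_requests = redis.call("ZCARD", tenant_key)
-- GET returns false for a missing key; default to 5 requests per hour
local tenant_quota = tonumber(redis.call("GET", KEYS[2])) or 5

if current_requests < tenant_quota then
  redis.call("ZADD", tenant_key, current_time, ARGV[2])
  redis.call("EXPIRE", tenant_key, 3600)
  -- Return flat arrays: Redis only converts the array part of a Lua table
  return {1, tenant_quota - current_requests - 1}
end

-- A slot frees up when the oldest request leaves the window
local oldest = redis.call("ZRANGE", tenant_key, 0, 0, "WITHSCORES")
local reset_time = current_time + 3600
if oldest[2] then reset_time = tonumber(oldest[2]) + 3600 end
return {0, 0, reset_time}
```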

Implement circuit breakers at the carrier level to prevent cascade failures when USPS or FedEx endpoints start rejecting requests. When the new USPS API hits rate limits or returns errors, your circuit breaker should immediately route traffic to backup services. But design circuit breakers that understand multi-tenant context—a circuit breaker that trips because of one tenant's abuse shouldn't block all tenants.

The key insight: tenant-level circuit breakers that track failure rates independently, combined with carrier-level circuit breakers that protect against systemic issues. When tenant A's requests start failing due to malformed addresses, their circuit breaker opens without affecting tenant B's traffic flow.
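A rough Python sketch of that split, assuming a simple consecutive-failure breaker and a caller that can classify each failure as tenant-caused or systemic (for example, a validation error versus a timeout or carrier-wide 5xx). The thresholds (5 per tenant, 20 per carrier, 30-second cool-down) and the class names are hypothetical:

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class CircuitBreaker:
    """Opens after `failure_threshold` consecutive failures."""
    failure_threshold: int = 5
    reset_timeout: float = 30.0
    failures: int = 0
    opened_at: Optional[float] = None

    def allow(self, now: float) -> bool:
        if self.opened_at is None:
            return True
        # Once the cool-down has passed, traffic flows again; the next
        # failure re-opens the breaker and the next success closes it.
        return now - self.opened_at >= self.reset_timeout

    def record(self, success: bool, now: float) -> None:
        if success:
            self.failures = 0
            self.opened_at = None
        else:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = now


class TenantAwareBreakers:
    """Hypothetical layout: one breaker per (tenant, carrier) plus one per carrier."""

    def __init__(self, carrier_threshold: int = 20):
        self.carrier_threshold = carrier_threshold
        self.tenants: Dict[Tuple[str, str], CircuitBreaker] = {}
        self.carriers: Dict[str, CircuitBreaker] = {}

    def _tenant(self, tenant_id: str, carrier: str) -> CircuitBreaker:
        return self.tenants.setdefault((tenant_id, carrier), CircuitBreaker())

    def _carrier(self, carrier: str) -> CircuitBreaker:
        return self.carriers.setdefault(
            carrier, CircuitBreaker(failure_threshold=self.carrier_threshold))

    def allow(self, tenant_id: str, carrier: str, now: float) -> bool:
        return self._carrier(carrier).allow(now) and self._tenant(tenant_id, carrier).allow(now)

    def record(self, tenant_id: str, carrier: str, success: bool,
               systemic: bool, now: float) -> None:
        # Every outcome counts against the tenant's own breaker.
        self._tenant(tenant_id, carrier).record(success, now)
        # Only successes and systemic failures reach the carrier breaker,
        # so one tenant's malformed addresses cannot trip it for everyone.
        if success or systemic:
            self._carrier(carrier).record(success, now)
```

Five malformed-address failures from tenant A open only tenant A's USPS breaker; tenant B's calls to `allow("tenant_b", "usps", now)` keep returning `True`.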

Distributed Counter Coordination Without Central Bottlenecks

Avoid centralized rate limiting databases that become bottlenecks during traffic spikes. Instead, use distributed counters with eventual consistency that synchronize quota usage across multiple application instances without creating single points of failure.

Redis Lua scripts provide atomic operations for quota checks, but design them to handle network partitions gracefully. When your primary Redis instance becomes unavailable, your rate limiter should fail open with local counters rather than blocking all traffic:

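```lua
-- Local counter fallback during Redis unavailability.
-- Runs in the application, not inside Redis; redis_available(),
-- check_distributed_quota() and check_local_quota() are placeholders.
if redis_available() then
  return check_distributed_quota(tenant_id, carrier, endpoint)
else
  -- Fail open, but cap each instance at a conservative local limit
  return check_local_quota(tenant_id, carrier, endpoint, fallback_limit)
end
```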

Implement quota synchronization events that reconcile distributed state periodically. Every 60 seconds, each application instance publishes its local quota consumption to a message queue. Other instances adjust their local counters to maintain approximate global accuracy without requiring real-time synchronization.

Handle counter drift by implementing periodic reconciliation. Distributed counters accumulate errors over time—network delays, failed synchronization events, and instance restarts create discrepancies between local and global quota state. Build reconciliation processes that detect drift and redistribute quotas fairly across tenants.
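One way to sketch the sync-and-reconcile loop in Python, assuming a list stands in for one round of messages from the queue and that an authoritative count (for example, the Redis sorted-set cardinality once Redis is reachable again) is available for reconciliation. `InstanceCounter` and the drift `tolerance` are hypothetical, window expiry is omitted, and the sketch covers drift detection and correction rather than fair redistribution:

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# One round of messages from the (hypothetical) quota-sync queue.
Bus = List[Tuple[str, str, int]]  # (instance_id, tenant_id, requests)


@dataclass
class InstanceCounter:
    """Approximate per-tenant quota usage as seen by one application instance."""
    instance_id: str
    unsynced: Dict[str, int] = field(default_factory=dict)
    estimate: Dict[str, int] = field(default_factory=dict)

    def record(self, tenant_id: str, n: int = 1) -> None:
        self.unsynced[tenant_id] = self.unsynced.get(tenant_id, 0) + n
        self.estimate[tenant_id] = self.estimate.get(tenant_id, 0) + n

    def publish(self, bus: Bus) -> None:
        # Called every sync interval (60 s in the text): emit deltas, then reset.
        for tenant_id, n in self.unsynced.items():
            bus.append((self.instance_id, tenant_id, n))
        self.unsynced.clear()

    def consume(self, bus: Bus) -> None:
        # Fold in peers' deltas; our own were counted when recorded.
        for source, tenant_id, n in bus:
            if source != self.instance_id:
                self.estimate[tenant_id] = self.estimate.get(tenant_id, 0) + n

    def reconcile(self, authoritative: Dict[str, int], tolerance: int = 2) -> Dict[str, int]:
        """Snap drifted estimates back to the authoritative count; return the drift."""
        drift = {}
        for tenant_id, actual in authoritative.items():
            delta = self.estimate.get(tenant_id, 0) - actual
            if abs(delta) > tolerance:
                drift[tenant_id] = delta
                self.estimate[tenant_id] = actual
        return drift
```

After one round where instance `a` records three requests and instance `b` records one, both estimate four; if the authoritative count later reads ten, `reconcile` reports a drift of -6 and corrects the estimate.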

Graceful Degradation When Carriers Change Rules

USPS made PKCE mandatory across its APIs in early 2025, and other major carriers, FedEx among them, followed suit. When carriers tighten restrictions or change authentication requirements, your rate limiting system needs graceful degradation paths that preserve tenant SLAs.

Multi-carrier routing becomes essential during quota exhaustion. When USPS hits its 60-request hourly limit, automatically route address validation requests to alternative providers such as SmartyStreets, Lob, or PostGrid while keeping response formats consistent. Design routing logic that considers both quota availability and tenant preferences:

- Primary route: USPS (when quota available)

- Fallback route: SmartyStreets (for high-accuracy requirements)

- Emergency route: Local address database (for basic validation)
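A minimal routing sketch for the chain above, assuming each provider exposes a per-tenant quota check and a coarse accuracy flag. The `Route` type, the provider labels, and the `require_high_accuracy` preference are illustrative; normalizing each provider's response into one format is left to the caller:

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Route:
    name: str                          # label only, e.g. "usps"
    has_quota: Callable[[str], bool]   # tenant_id -> is quota available right now?
    high_accuracy: bool                # does this route meet high-accuracy needs?


def choose_route(tenant_id: str, routes: List[Route],
                 require_high_accuracy: bool) -> Optional[str]:
    """Return the first route, in priority order, that fits the tenant and has quota."""
    for route in routes:
        if require_high_accuracy and not route.high_accuracy:
            continue
        if route.has_quota(tenant_id):
            return route.name
    return None  # nothing available: queue, retry later, or reject


# Illustrative state: tenant_a has exhausted its USPS allocation.
usps_remaining = {"tenant_a": 0, "tenant_b": 3}
routes = [
    Route("usps", lambda t: usps_remaining.get(t, 0) > 0, high_accuracy=True),
    Route("smartystreets", lambda t: True, high_accuracy=True),
    Route("local_db", lambda t: True, high_accuracy=False),
]
```

Here `choose_route("tenant_b", routes, True)` returns `"usps"` and `choose_route("tenant_a", routes, True)` returns `"smartystreets"`. The local database is reached only when both higher routes are unavailable and the tenant accepts basic validation.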

Implement tenant priority queuing during capacity constraints. When multiple tenants compete for limited carrier quota, prioritize requests based on tenant tier, SLA requirements, or request urgency. Premium tenants get guaranteed quota allocation, while standard tenants use shared pools with overflow protection.
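A sketch of tier-based priority queuing with Python's `heapq`, assuming three hypothetical tiers and an optional urgency flag that moves a request ahead within its tier. It handles ordering only; guaranteed premium allocations would come from the per-tenant quota buckets:

```python
import heapq
import itertools
from typing import Any, List, Tuple

# Hypothetical tiers: lower number is served first.
TIER_PRIORITY = {"premium": 0, "standard": 1, "batch": 2}


class QuotaQueue:
    """Orders pending carrier requests by tenant tier, then urgency, then arrival."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, int, str, Any]] = []
        self._seq = itertools.count()  # unique tie-breaker keeps FIFO order

    def push(self, tenant_id: str, tier: str, request: Any, urgent: bool = False) -> None:
        entry = (TIER_PRIORITY[tier], 0 if urgent else 1, next(self._seq), tenant_id, request)
        heapq.heappush(self._heap, entry)

    def drain(self, available: int) -> List[Tuple[str, Any]]:
        """Release up to `available` requests, e.g. the quota left in this window."""
        released = []
        while self._heap and len(released) < available:
            _, _, _, tenant_id, request = heapq.heappop(self._heap)
            released.append((tenant_id, request))
        return released
```

Because the sequence counter is unique, heap comparisons never reach the tenant ID or request payload, so payloads do not need to be comparable.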

Build cost attribution mechanisms that track quota consumption per tenant and route charges appropriately. Cargoson, along with competitors like MercuryGate and BluJay, built abstraction layers that handle the OAuth complexity, implement intelligent rate limiting queues, and provide fallback mechanisms when USPS quotas are exceeded. Cargoson and similar platforms such as nShift, EasyPost, and ShipEngine also implement chargeback systems that allocate carrier API costs based on actual usage patterns.

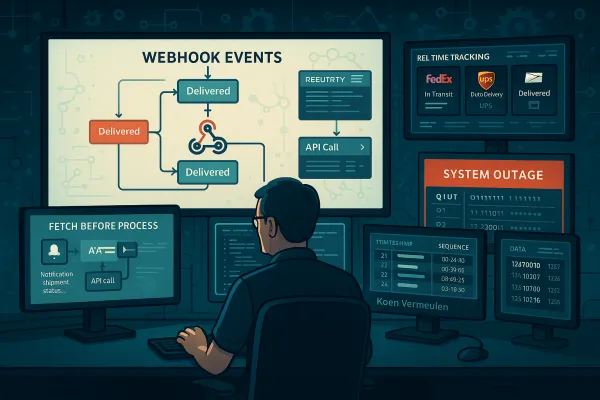

Production Monitoring That Actually Catches Problems

Generic uptime monitoring misses the nuanced failures that multi-tenant rate limiting creates. October's cascade of carrier API failures exposed what many of us already suspected: uptime monitoring isn't enough anymore. Build observability systems that understand tenant-specific quota patterns and carrier-specific failure modes.

Track per-tenant quota utilization with time-series metrics that reveal usage patterns. Monitor quota burn rates, peak usage periods, and tenant-specific spike patterns. When tenant A consistently exhausts their quota allocation, you can proactively increase their limit before it affects their operations.

Implement carrier-specific rate limit breach detection that distinguishes between soft limits, hard limits, and temporary throttling. UPS APIs typically respond within 200-400 ms for authentication requests, while DHL SOAP endpoints take 800-1,200 ms. A shift in these baselines signals infrastructure changes that can affect your authentication flows before they cause outright failures. Set up alerts that fire when response times increase by 50% or when error rates exceed 2% for any tenant-carrier combination.
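The alert rule above, sketched as a Python function to evaluate once per tenant-carrier window. The `Window` shape and the use of median latency are assumptions; the 50% and 2% thresholds come from the text:

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Window:
    """Metrics for one tenant-carrier pair over one evaluation window (assumed shape)."""
    median_ms: float
    requests: int
    errors: int


def alert_reasons(baseline_ms: float, current: Window,
                  max_latency_increase: float = 0.50,
                  max_error_rate: float = 0.02) -> List[str]:
    reasons = []
    # Latency has grown by 50% or more over the recorded baseline.
    if current.median_ms >= baseline_ms * (1 + max_latency_increase):
        reasons.append("latency")
    # Error rate is above 2% (skip empty windows to avoid dividing by zero).
    if current.requests and current.errors / current.requests > max_error_rate:
        reasons.append("error_rate")
    return reasons
```

Taking 300 ms (the midpoint of the quoted UPS range) as a hypothetical baseline, a window with a 450 ms median and 10 errors in 1,000 requests returns `["latency"]`.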

Build synthetic monitoring that tests rate limiting behavior continuously. Send controlled test requests to each carrier API to verify quota enforcement, measure response times, and detect policy changes before they affect production traffic. Synthetic tests should simulate different tenant patterns—burst traffic, sustained usage, and mixed request types.

Cost Attribution and Chargeback Systems

Multi-tenant rate limiting requires transparent cost attribution that tracks quota consumption per tenant and allocates charges based on actual usage. Design billing systems that capture both API call costs and opportunity costs when tenants exceed their allocated quotas.

Track quota overage charges when tenants consume more than their allocated carrier quotas. If tenant A uses 45 of the 60 hourly USPS requests, charge them for 75% (45/60) of the hourly USPS cost based on actual consumption, not on their configured allocation. This prevents cost leakage where high-volume tenants consume resources without proportional billing.
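A sketch of usage-based attribution for one hourly window, assuming per-tenant request counts and a known hourly cost for the shared USPS quota. The function name, the return shape, and the 100.0 cost in the usage note are hypothetical:

```python
from typing import Dict


def attribute_usage(usage: Dict[str, int], allocations: Dict[str, int],
                    hourly_cost: float, carrier_quota: int = 60) -> Dict[str, dict]:
    """Bill each tenant by the share of the carrier's hourly quota it actually used."""
    bills = {}
    for tenant, used in usage.items():
        bills[tenant] = {
            "share": used / carrier_quota,
            "charge": hourly_cost * used / carrier_quota,
            # Requests beyond the tenant's configured allocation, for overage pricing.
            "overage_requests": max(0, used - allocations.get(tenant, 0)),
        }
    return bills
```

For tenant A with 45 requests against a 40-request allocation, `attribute_usage({"tenant_a": 45}, {"tenant_a": 40}, hourly_cost=100.0)` yields a 0.75 share, a charge of 75.0, and 5 overage requests.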

Implement tiered pricing that rewards efficient quota usage. Tenants who consistently stay under their allocated quotas get better per-request pricing, while tenants who frequently trigger overflow routing pay premium rates for guaranteed capacity.

Migration Strategies That Preserve SLAs

The migration deadlines are immovable. The rate limiting constraints are permanent. Testing strategies for multi-tenant rate limiting require careful coordination to avoid disrupting production traffic while validating quota enforcement across tenant boundaries.

Shadow traffic testing lets you validate rate limiting behavior without impacting production requests. Run parallel systems where rate limiting decisions are calculated but not enforced, comparing theoretical quota consumption against actual usage patterns. This reveals tenant behaviors that might trigger unexpected quota exhaustion in production.

For the FedEx migration, run both APIs in parallel: have your application call the SOAP and REST endpoints simultaneously and compare results to identify discrepancies before the June 1 deadline. Enterprise TMS platforms like Cargoson, Manhattan Associates, and SAP TM have already implemented FedEx REST endpoints and are managing dual-API operations for clients during the transition period.

Rolling migration patterns maintain tenant SLAs during the transition from legacy carrier APIs to rate-limited modern endpoints. Migrate tenants in waves—start with low-volume tenants to validate rate limiting behavior, then migrate medium-volume tenants while monitoring quota consumption patterns, finally migrate high-volume tenants with dedicated quota allocations.
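Wave assignment could be as simple as bucketing tenants by daily request volume. The thresholds below (1,000 and 10,000 requests per day) and the function name are placeholder assumptions, not recommendations:

```python
from typing import Dict, List


def plan_waves(daily_volume: Dict[str, int], low_max: int = 1_000,
               medium_max: int = 10_000) -> List[List[str]]:
    """Split tenants into low-, medium-, and high-volume migration waves."""
    waves: List[List[str]] = [[], [], []]
    for tenant, volume in sorted(daily_volume.items(), key=lambda kv: kv[1]):
        if volume <= low_max:
            waves[0].append(tenant)
        elif volume <= medium_max:
            waves[1].append(tenant)
        else:
            waves[2].append(tenant)  # migrate last, with dedicated quota
    return waves
```

Sorting by volume first means each wave lists its smallest tenants first, which gives a natural order for migrating within a wave too.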

Feature flags control rate limiting enforcement per tenant, allowing gradual rollout of quota restrictions. Start with monitoring-only mode to establish baseline usage patterns, then enable soft limits that log violations without blocking requests, finally enforce hard limits with proper fallback routing configured.
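A sketch of per-tenant enforcement modes, assuming the rate limiter has already produced an allow/deny decision and a feature-flag lookup supplies each tenant's mode. `MONITOR` doubles as the shadow mode described earlier. The enum and function names are hypothetical:

```python
import logging
from enum import Enum
from typing import List, Tuple

log = logging.getLogger("rate_limit")


class Enforcement(Enum):
    MONITOR = "monitor"  # compute and record the decision, never block (shadow mode)
    SOFT = "soft"        # log violations, still allow the request
    HARD = "hard"        # block; the caller falls back to alternate routing


def apply_decision(tenant_id: str, allowed: bool, mode: Enforcement,
                   shadow_log: List[Tuple[str, bool]]) -> bool:
    """Return whether the request should proceed under the tenant's enforcement mode."""
    if mode is Enforcement.MONITOR:
        shadow_log.append((tenant_id, allowed))  # compare against real usage later
        return True
    if mode is Enforcement.SOFT:
        if not allowed:
            log.warning("soft limit exceeded for tenant %s", tenant_id)
        return True
    return allowed
```

Promoting a tenant from `MONITOR` to `SOFT` to `HARD` is then a flag change rather than a deployment.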

Incident response procedures for quota exhaustion should include automatic tenant notification, escalation paths for quota increases, and emergency overflow routing. When tenant quotas are exhausted, your system should automatically notify tenant administrators, provide self-service quota increase options, and route urgent requests through backup carriers while maintaining audit trails.

The companies that survive 2026's migration crisis won't be the ones with perfect technical execution. They'll be the ones who recognized that carrier integrations are infrastructure, not features, and invested accordingly. Your choice is whether to build the resilience your enterprise needs, or let carrier API changes control your shipping operations.

The 2026 carrier API migration wave exposed a fundamental truth: rate limiting isn't a technical detail you can bolt onto existing systems. It's a core architectural constraint that affects every aspect of multi-tenant carrier integration. Build systems that embrace these constraints from the ground up, and your tenants will thank you when the next wave of carrier changes arrives.